Title

Create new category

Edit page index title

Edit category

Edit link

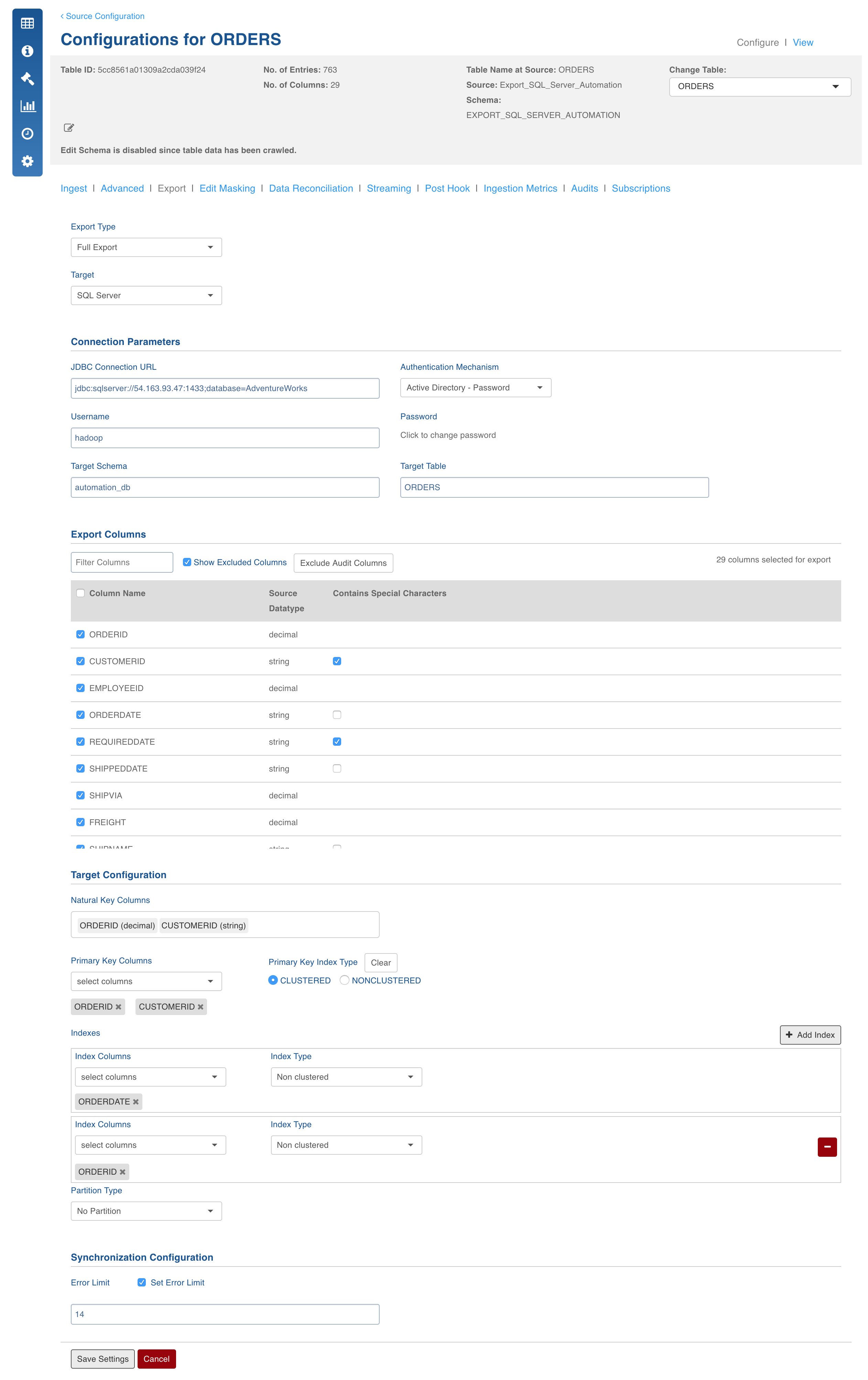

SQL Server Export

This feature exports the data from Hive to SQL server database.

Prerequisites

For SQL Server export feature to work, a source should be created and data should have been ingested. You must also install BCP. Refer to the installation procedure from Microsoft for your specific version of linux.

After installing BCP, ensure to restart Hangman before submitting an export job using the following commands:

$IW_HOME/bin/stop.sh hangman

$IW_HOME/bin/start.sh hangman

Field Description

- Export Type: Select Full Export/Incremental Export into SQL Server database.

- Target Database: The database type you want to export to (SQL Server).

- JDBC Connection URL: The connection URL needed to connect to the target database.

- Authentication Mechanism: The authentication mechanism used to connect to SQL server. The options include SQL Server Authentication and Active Directory-Password.

NOTE: When using Azure SQL Data Warehouse with Active Directory - Password authentication for export, ensure to add the $IW_HOME/lib/sqlserver_az_ad/ path to iw_export_classpath in the $IW_HOME/conf/conf.properties file.

- Username: Username of the target database.

- Password: Password of the target database.

- Target Schema: The schema in which you want the target table to be created.

- Target Table: Name of the target table.

If the table already exists in the SQL server database, the system checks if the same table is usable (compatible) for export based on configurations like column names and types, primary indexes, partitions etc:

- If the table is usable for export, the system creates a temp table, exports the data, and transfers the data to the existing table. This also ensures that the actual table can be used by users while export is happening.

- If the table in the SQL server database is not compatible, the system deletes the existing data based on admin configuration variable (that is, if full_export_overwrite_table=true).

- Export Columns: The selections of columns that must be exported to the target database. You must select at least one column. You can use the "Select all" and "Deselect All" buttons to make the selection faster.

- Natural Key Columns: Natural Key Columns are the columns that help determine a row uniquely. Primary Index and Partition Column must be a part of the Natural Key Columns for export to SQL Server.

- Primary Key Columns: A primary key column is a column in a base table that must contain only unique and not null values. Select Clustered or Nonclustered index type as required. A clustered index reorders the way records in the table are physically stored. A nonclustered index is the one in which the logical order of the index does not match the physically stored order of the rows on disk.

- Index Columns: Select the required index columns.

NOTE: You can add multiple indexes. However, make sure that a table has only one clustered index.

- Partition Type: Select Range_N or No Partition. SQL server supports range partitioning on numeric, date, and character types. For numeric and date types, provide the start, end, and interval values.

For Character types, provide comma separated list of strings. For example, 'abc','pqr','xyz' (Strings in single quotes)002E

- Set Error Limit: If this checkbox is checked, you can specify an error limit.

- Error Limit: The limit on the numbers of rows that go to error table, before the job fails.

NOTE: To perform an incremental export, follow all the steps above, but select Incremental Export in the Export Type drop down and also mention the Staging Schema as shown below and click Save Settings.

Advanced Configurations

Following are the admin configurations that you must set for a successful SQL Server export:

- FULL_EXPORT_OVERWRITE_TABLE: The configuration when set to true, deletes the data from the target table and exports the data, retaining the target table schema. This configuration is used only for indexed tables. For non indexed tables, the target table is dropped, a new table is created, and the data is exported to the new target table.

- FULL_EXPORT_CREATE_STAGING_TABLE_KEY: To create a temp table for full export, set this value to true. The default value is false.

- bcp_temp_data_path: The path to store the temporary delimited files that are used as input for BCP. The default value is $IW_HOME/bcp/data.

- bcp_crawl_separator: The field delimiter for the temp delimited file, which is the input file for bcp.

- bcp_row_delimiter: The row delimiter for the csv file generated. Please make sure that "\n" is part of the delimiter.

- BCP_POOL_SIZE: The configuration key to parallelize the data transfer to SQL Server, to export the data faster.

Known Issues

- "\n" in data is not supported as of now.

- The supported encoding is latin9 (char datatype).

- Only single character delimiters are supported, which can be changed by the configuration bcp_crawl_separator.

- SSL encryption is not supported as of now.

- Order for indexes cannot be specified right now. It is determined by the order that is already there in the Hive table.

- Currently, Incremental Export after the first export with zero rows in not supported. In such situation, please perform a full export once there is some data in the Hive table; post which, you can perform incremental exports.

For more details, refer to our Knowledge Base and Best Practices!

For help, contact our support team!

(C) 2015-2022 Infoworks.io, Inc. and Confidential