Launching and Configuring Infoworks DataFoundry

Perform the following steps to launch and configure Infoworks DataFoundry:

- Select Infoworks DataFoundry from the Google Cloud Platform Marketplace console.

- Click LAUNCH. The following screen is displayed.

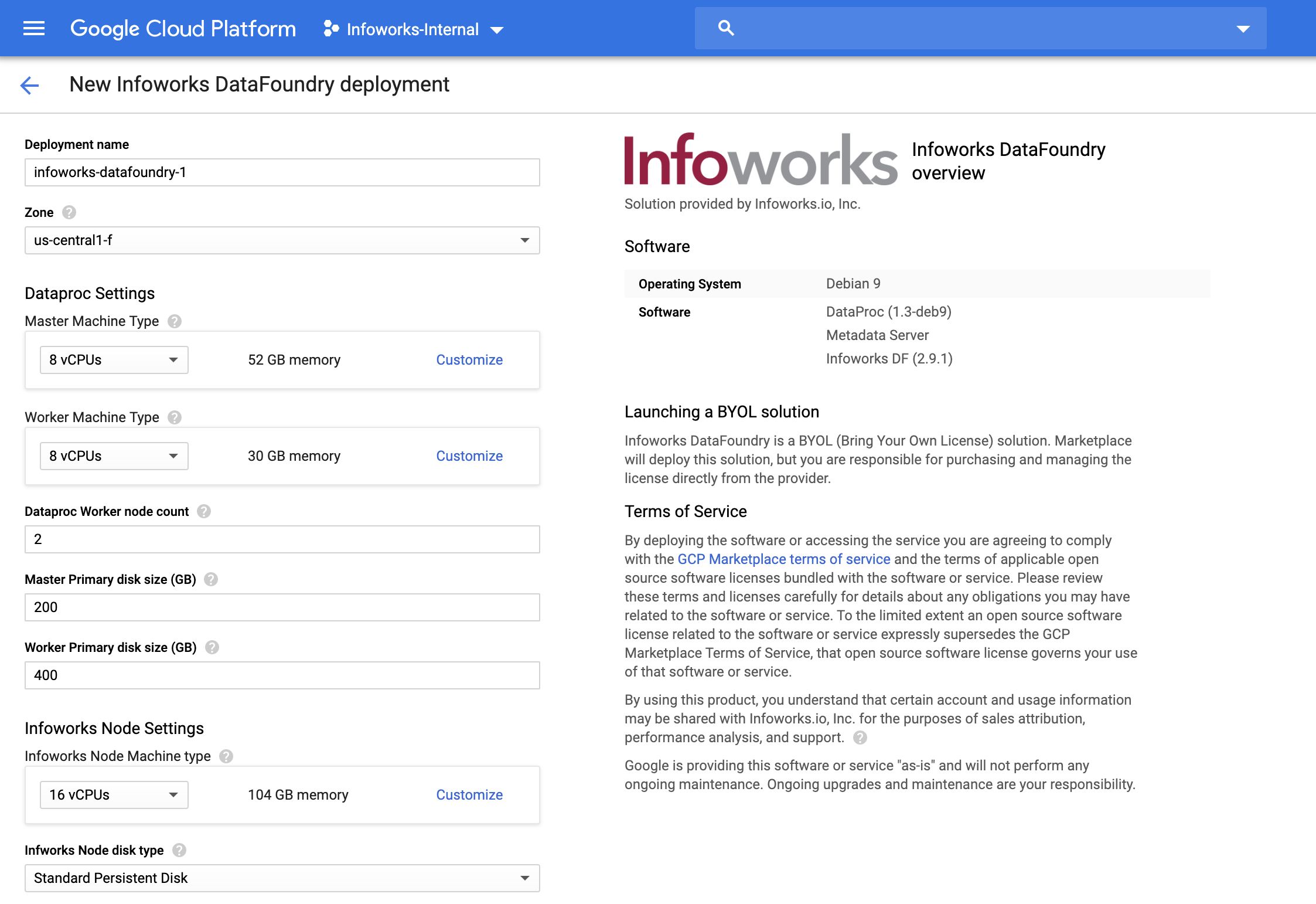

Deploying Infoworks Autonomous Data Engine

Configure the settings for the following machines:

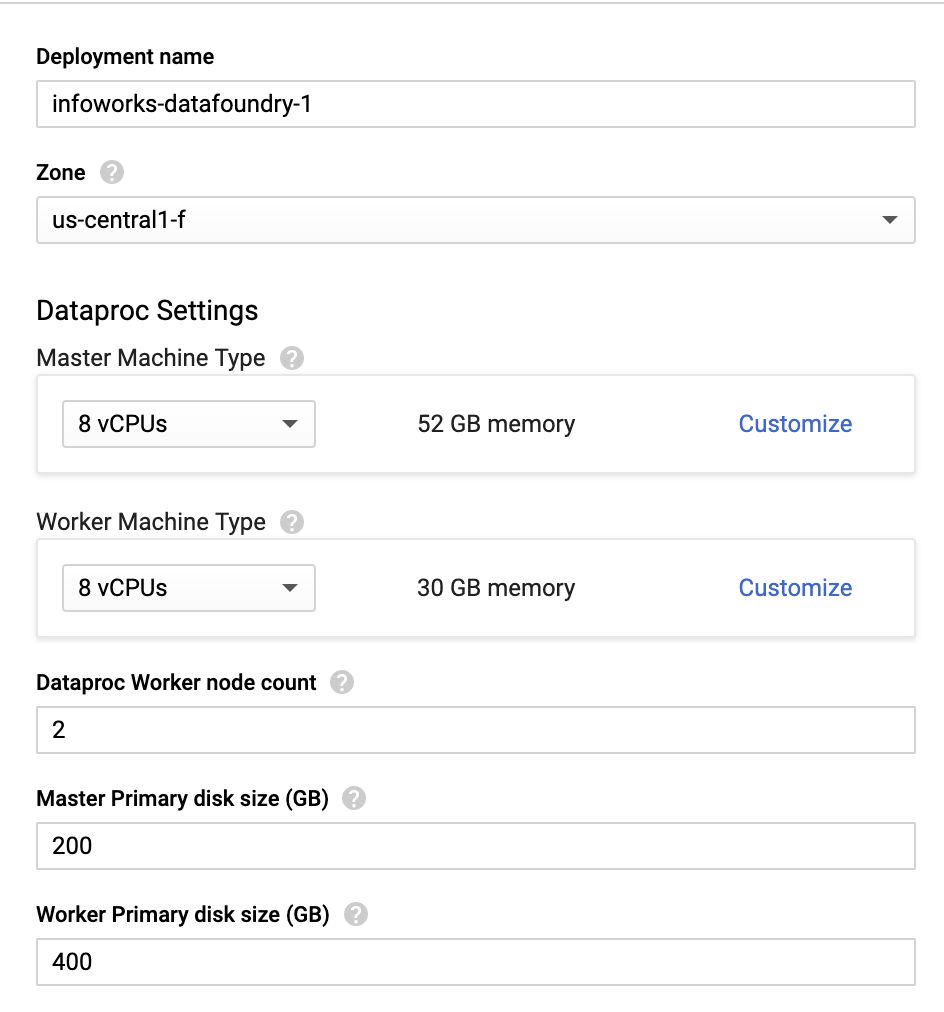

- Deployment name - Enter the name for your deployment instance.

- Zone - The zone in which the Dataproc Hadoop cluster is deployed.

Dataproc Settings: Configure the Dataproc Hadoop cluster for the deployment.

- Master Machine Type - Determines the specifications, such as memory and number of virtual cores, of the Dataproc master instance. Master services run on this instance.

- Worker Machine Type - Determines the specifications, such as memory and number of virtual cores, of each Dataproc worker instance. Worker services run on these instances.

- Dataproc Worker node count - Number of Dataproc worker nodes in the cluster. The minimum value is 2.

- Master Primary disk size (GB) - Size of the primary disk of the Dataproc master node.

- Worker Primary disk size (GB) - Size of the primary disk of each Dataproc worker node.
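If you script or review deployments, the constraints on the Dataproc Settings fields can be checked before you submit the form. The following is a minimal sketch: the DataprocSettings class, its field names, and the machine types in the example are hypothetical and are not part of the Marketplace form itself; only the rule "at least 2 worker nodes" comes from this page.

```python
from dataclasses import dataclass


# Hypothetical container mirroring the Dataproc Settings fields on the form.
@dataclass
class DataprocSettings:
    master_machine_type: str
    worker_machine_type: str
    worker_node_count: int
    master_disk_gb: int
    worker_disk_gb: int

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the values look valid."""
        problems = []
        # The deployment form requires at least two Dataproc worker nodes.
        if self.worker_node_count < 2:
            problems.append("worker_node_count must be at least 2")
        # Disk sizes are entered in GB and must be positive.
        for name in ("master_disk_gb", "worker_disk_gb"):
            if getattr(self, name) <= 0:
                problems.append(f"{name} must be a positive number of GB")
        return problems


# Example values are illustrative only.
settings = DataprocSettings("n1-standard-4", "n1-highmem-8", 1, 500, 500)
print(settings.validate())  # ['worker_node_count must be at least 2']
```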

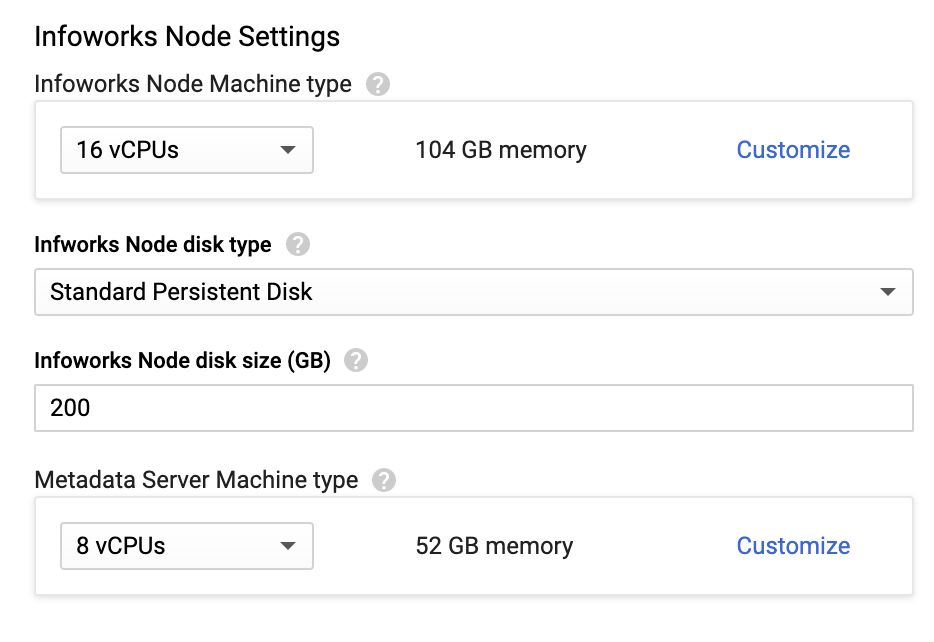

Infoworks Node Settings

- Infoworks Node Machine Type - This machine type determines the CPU and memory specifications of your Infoworks DataFoundry instance.

- Infoworks Node Disk Type - This is the disk type of your Infoworks edge node. The valid values are SSD or Standard.

- Infoworks Node Disk Size - This is the disk size of your Infoworks edge node.

- Metadata Server Machine Type - This machine type determines the CPU and memory of your metadata server instance.
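The Infoworks Node Disk Type field accepts only SSD or Standard. As an illustration, the sketch below maps those labels to the Compute Engine persistent disk type names pd-ssd and pd-standard. That mapping is an assumption made for this example; the Marketplace template may translate the labels differently.

```python
# Assumed mapping from the form's Disk Type labels to Compute Engine
# persistent disk type names; the actual template may differ.
DISK_TYPES = {"SSD": "pd-ssd", "Standard": "pd-standard"}


def resolve_disk_type(label: str) -> str:
    """Map an Infoworks Node Disk Type label to a Compute Engine disk type name."""
    try:
        return DISK_TYPES[label]
    except KeyError:
        # Only SSD and Standard are valid values for this field.
        raise ValueError(
            f"invalid disk type {label!r}; expected one of {sorted(DISK_TYPES)}"
        ) from None


print(resolve_disk_type("SSD"))  # pd-ssd
```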

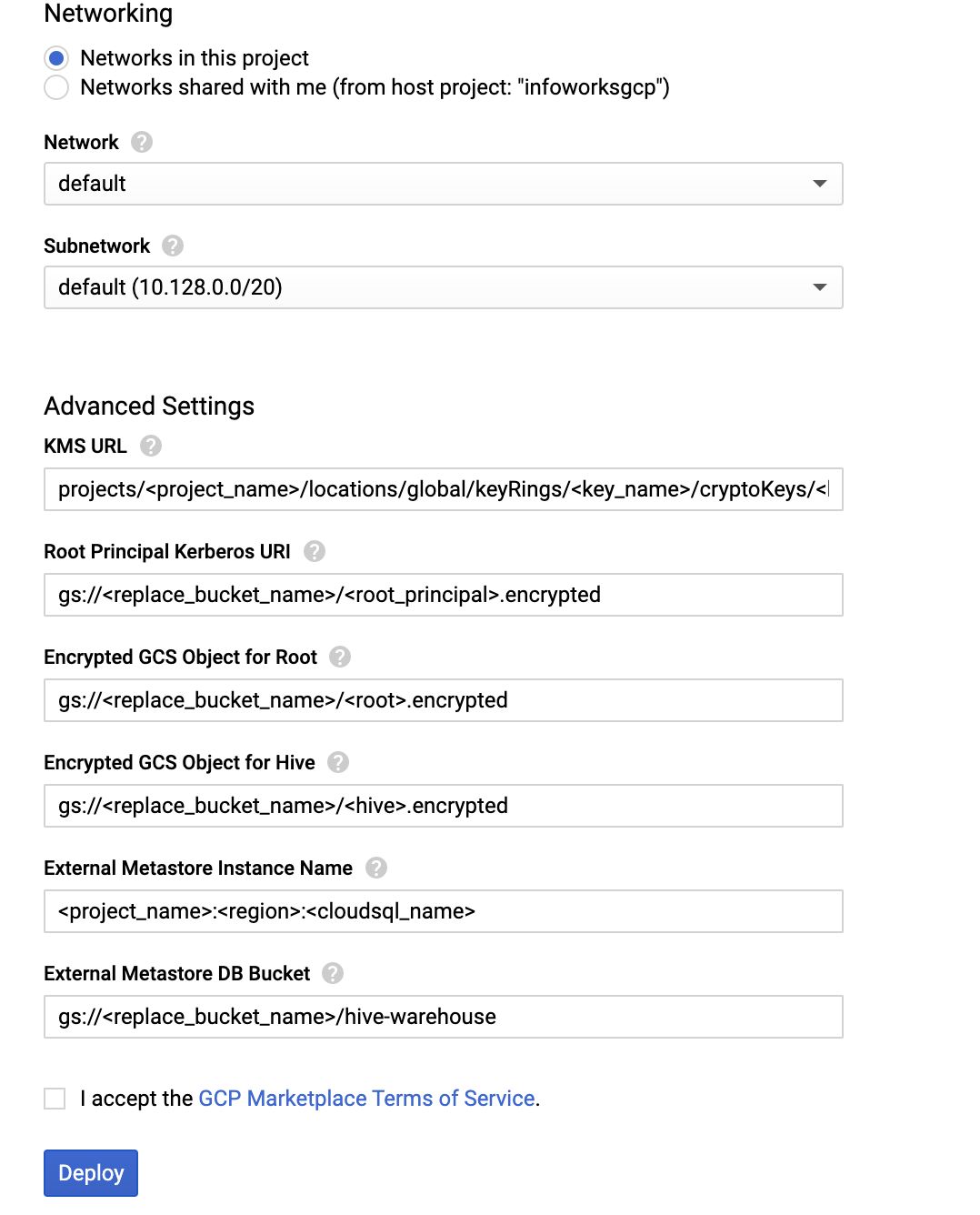

Networking

- Networking - Choose between a dedicated network within the project and a shared network from a different project.

- Network - The name of your Virtual Private Cloud (VPC) network. This network determines which network traffic the instance can access.

- Subnetwork - The IPv4 range from which your instance is assigned an address. Instances in different subnetworks can communicate with each other using their internal IPs as long as they belong to the same network.
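Because the subnetwork's range determines the instance's internal IPv4 address, a quick way to confirm that an address belongs to a given subnetwork is to test CIDR membership with Python's standard ipaddress module. The range and addresses below are hypothetical examples, not values used by the deployment.

```python
import ipaddress


def in_subnetwork(ip: str, cidr: str) -> bool:
    """Return True if an IPv4 address falls inside a subnetwork's CIDR range."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(cidr)


# Hypothetical subnetwork range and instance addresses.
print(in_subnetwork("10.128.0.5", "10.128.0.0/20"))  # True
print(in_subnetwork("10.140.0.5", "10.128.0.0/20"))  # False
```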

Advanced Settings

- KMS URL - The URL of the Key Management Service (KMS) keyring. Use the output of Step 8 of the Prerequisites section.

- Root Principal Kerberos URI - The GCS object that holds the encrypted Kerberos root principal password, that is, the Google Cloud Storage location to which the encrypted password file was copied in Step 12.a of the Prerequisites section.

- Encrypted GCS Object for Root - The GCS object that holds the encrypted password for the MySQL root user, that is, the Google Cloud Storage location to which the encrypted password file was copied in Step 12.b of the Prerequisites section.

- Encrypted GCS Object for Hive - The GCS object that holds the encrypted password for the MySQL Hive user, that is, the Google Cloud Storage location to which the encrypted password file was copied in Step 12.c of the Prerequisites section.

- External Metastore Instance Name - The connection name of the external metastore instance created in Cloud SQL in Step 13.4 of the Prerequisites section. Example: <project-name>:<region>:<cloudsql-name>

- External Metastore DB Bucket - The location where the external metastore DB stores the Hive metadata. Example: gs://<replace-bucket-name>/hive-warehouse
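Typos in the Advanced Settings values are easy to miss. The sketch below checks the two formats shown in the examples above: a Cloud SQL connection name of the form <project-name>:<region>:<cloudsql-name>, and a gs:// bucket URI. The function names and sample values are hypothetical. The simple colon-based pattern does not handle domain-scoped project IDs, which contain a colon themselves.

```python
import re

# Connection name format: <project-name>:<region>:<cloudsql-name>
CONNECTION_NAME = re.compile(
    r"^(?P<project>[^:]+):(?P<region>[^:]+):(?P<instance>[^:]+)$"
)


def parse_connection_name(name: str) -> dict:
    """Split a Cloud SQL instance connection name into its three parts."""
    match = CONNECTION_NAME.match(name)
    if match is None:
        raise ValueError(f"expected <project>:<region>:<instance>, got {name!r}")
    return match.groupdict()


def parse_gcs_uri(uri: str) -> tuple[str, str]:
    """Split a gs://bucket/path URI into (bucket, object path)."""
    if not uri.startswith("gs://"):
        raise ValueError(f"expected a gs:// URI, got {uri!r}")
    bucket, _, path = uri[len("gs://"):].partition("/")
    if not bucket:
        raise ValueError(f"missing bucket name in {uri!r}")
    return bucket, path


# Hypothetical values.
print(parse_connection_name("my-project:us-central1:hive-metastore"))
# {'project': 'my-project', 'region': 'us-central1', 'instance': 'hive-metastore'}
print(parse_gcs_uri("gs://my-bucket/hive-warehouse"))
# ('my-bucket', 'hive-warehouse')
```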

NOTE: The Dataproc cluster may require significant resources and a quota increase for the zone used for the deployment.

- Accept the GCP Marketplace terms of service, and then click Deploy.

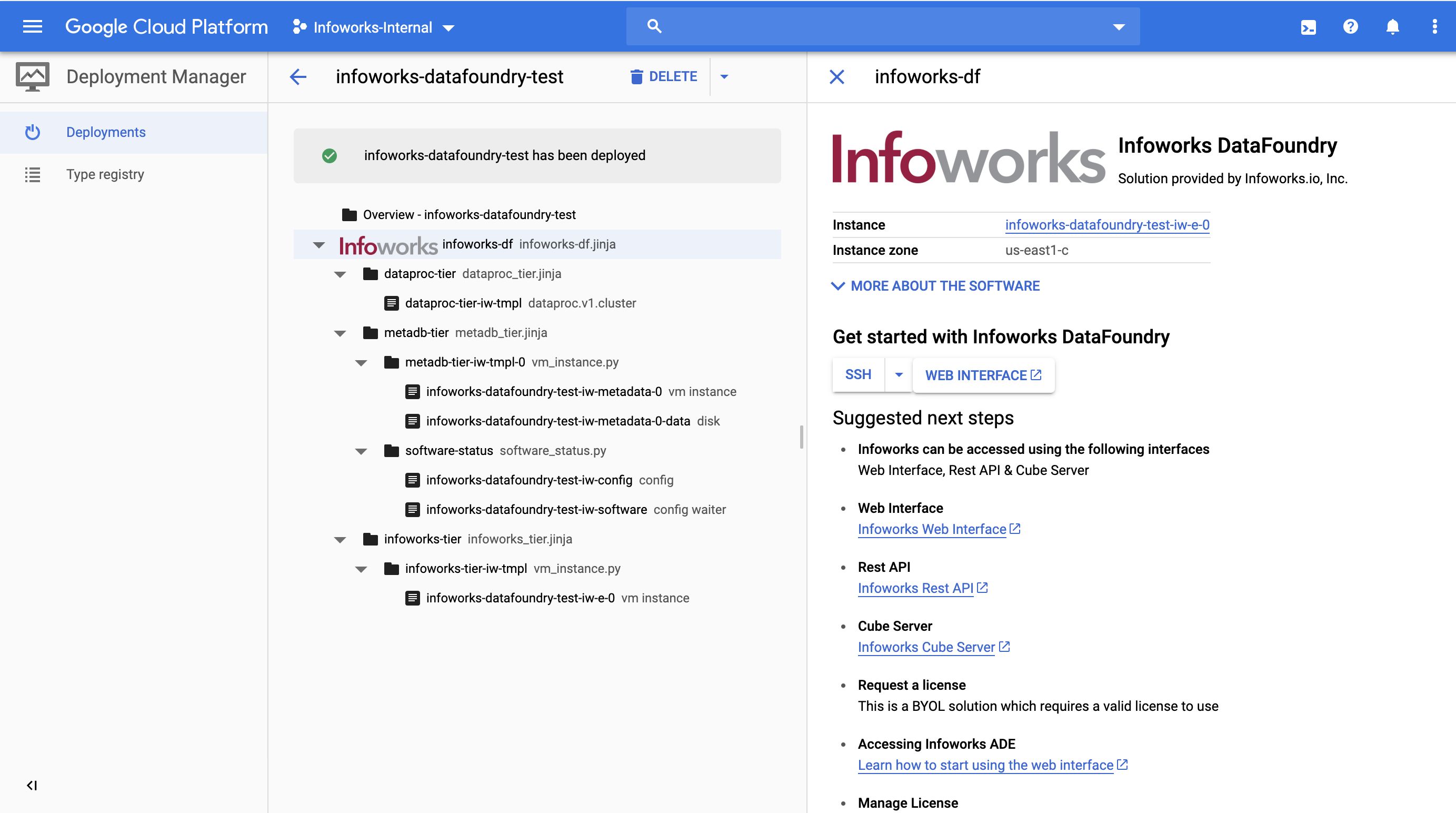

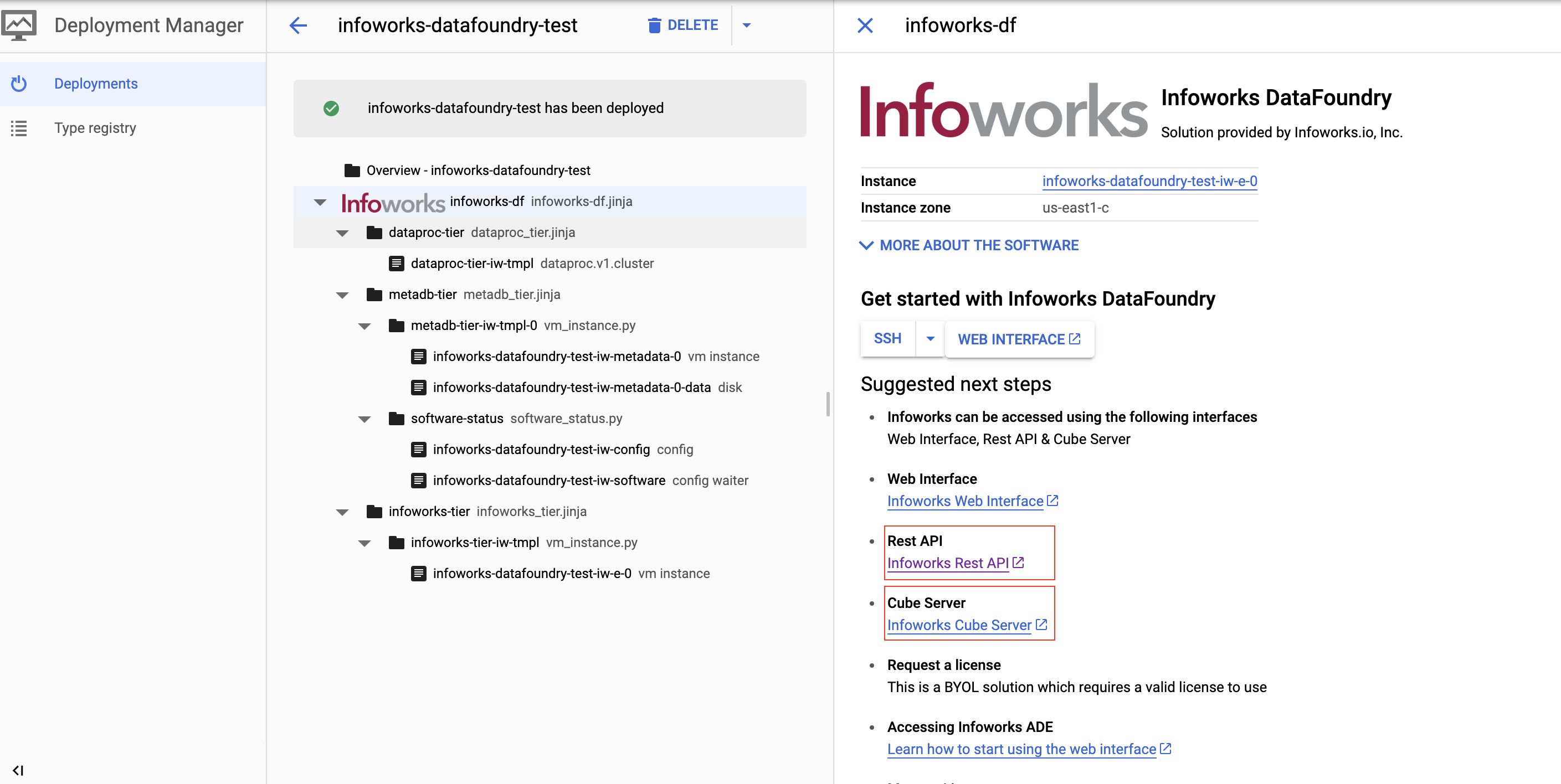

The following page is displayed.

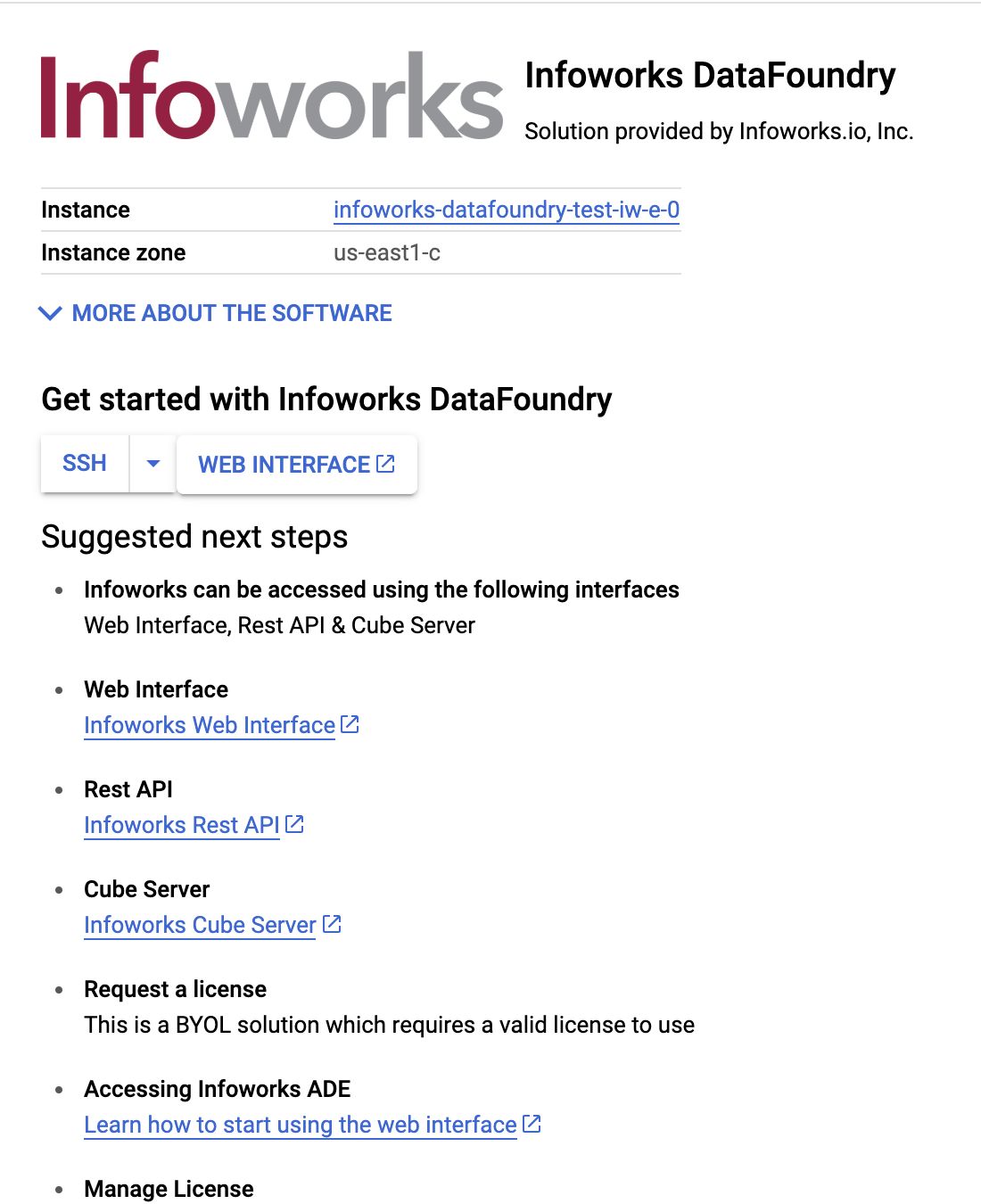

From this page, you can choose further actions, including the following:

- SSH - allows you to log in to the Infoworks server.

- Web Interface - allows you to access the Infoworks DataFoundry via the web interface.

Accessing Infoworks DataFoundry

On the web interface, enter the login credentials and click Sign In. The default email ID is admin@infoworks.io; to obtain the password, write to cloud@infoworks.io.

The Infoworks dashboard is displayed on successful login. It is recommended to change the password as described below.

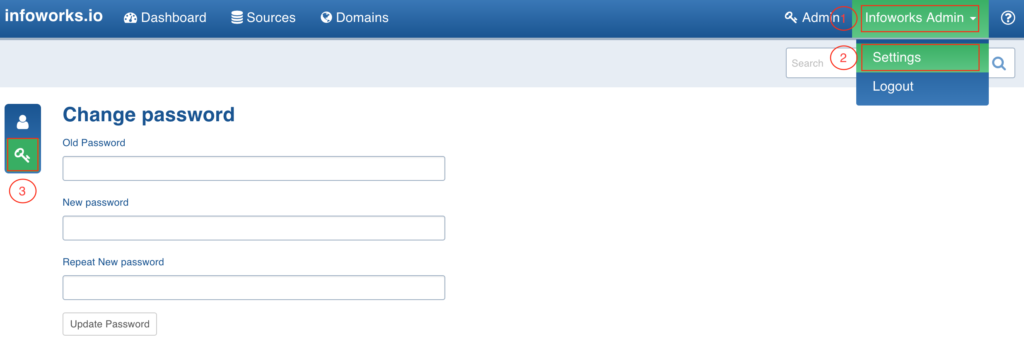

Changing Password

- In the Infoworks DataFoundry, click Infoworks Admin > Settings and click the Change Password icon.

- Enter the old and new passwords and click Update Password. The password will be updated.

License Key

To get started with Infoworks DataFoundry on Google Cloud Platform, you need a license key. Log in to Infoworks DataFoundry and navigate to Admin > License manager.

For instructions, see the License Management document. To obtain a license key, write to cloud@infoworks.io.

Configuration

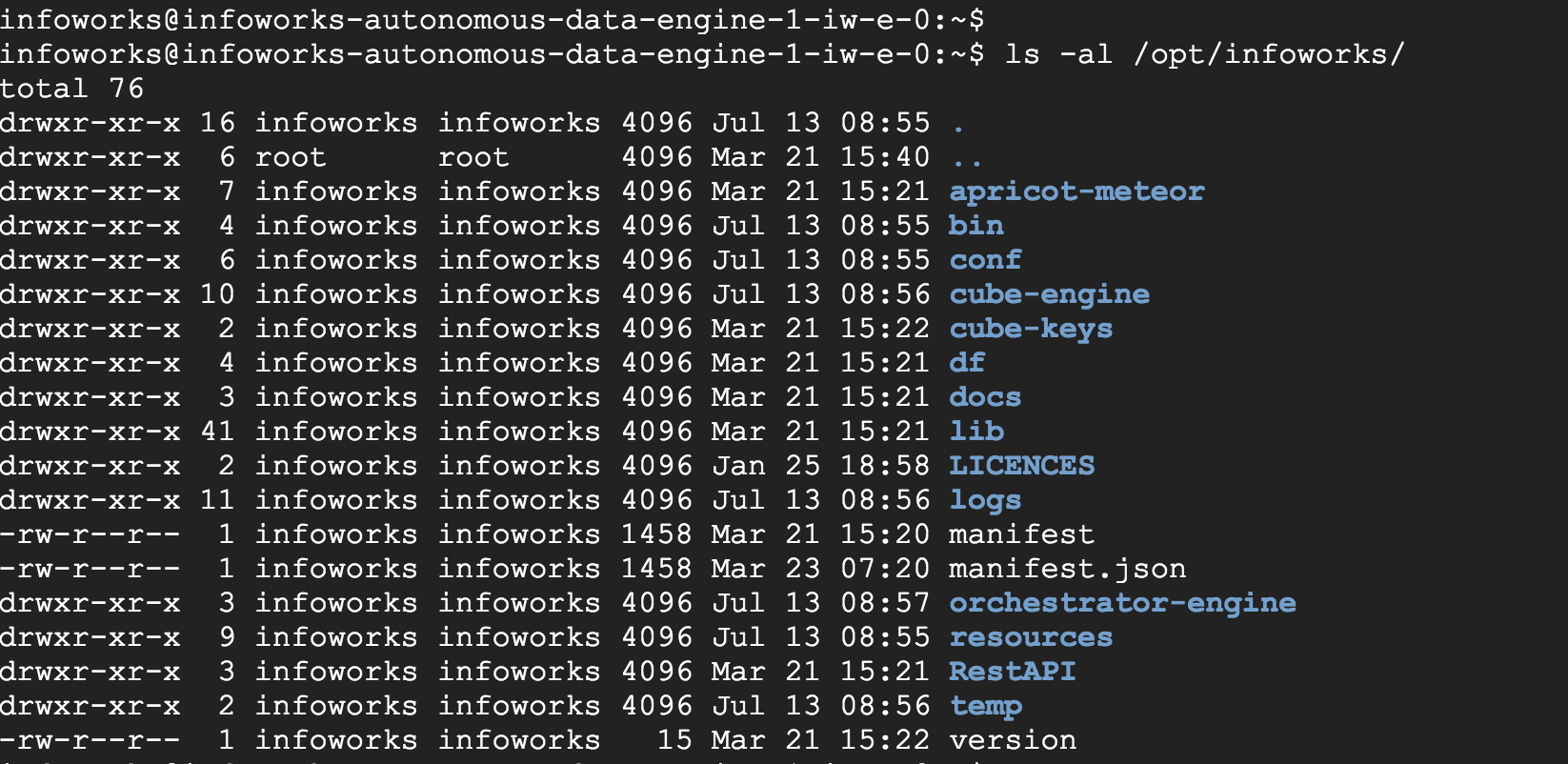

For a deeper understanding of the system, view the settings in the configuration files as follows:

- Click the SSH option.

The command prompt of the Infoworks server will be displayed.

- The default Unix user for Infoworks is infoworks.

- Switch to this user using the command: sudo su - infoworks

- Navigate to the Infoworks configuration directory using the command: cd /opt/infoworks/conf

- The configuration files are located in this folder. The basic configurations are in the conf.properties file.

- NOTE: It is recommended to add or override a configuration parameter only through the Infoworks Web Interface (Admin > Configuration).
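To inspect the settings without editing them, you could print the key/value pairs in conf.properties. This is a read-only sketch that assumes the file uses simple key=value lines with # or ! comments; it does not implement the full Java .properties syntax (for example, line continuations or ':' separators). Changes should still be made through the Web Interface, as noted above.

```python
from pathlib import Path

# Path from the steps above; run as the infoworks user.
CONF_PATH = Path("/opt/infoworks/conf/conf.properties")


def parse_properties(text: str) -> dict[str, str]:
    """Parse simple key=value lines, skipping blank lines and comment lines.

    Only the first '=' separates key from value, so values may contain '='.
    """
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "!")):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


if __name__ == "__main__":
    # Read-only: list the current settings, sorted by key.
    if CONF_PATH.exists():
        for key, value in sorted(parse_properties(CONF_PATH.read_text()).items()):
            print(f"{key} = {value}")
```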

Accessing REST API and Cube Server

Click the Infoworks REST API and Infoworks Cube Server links to access the REST API and cube server respectively.

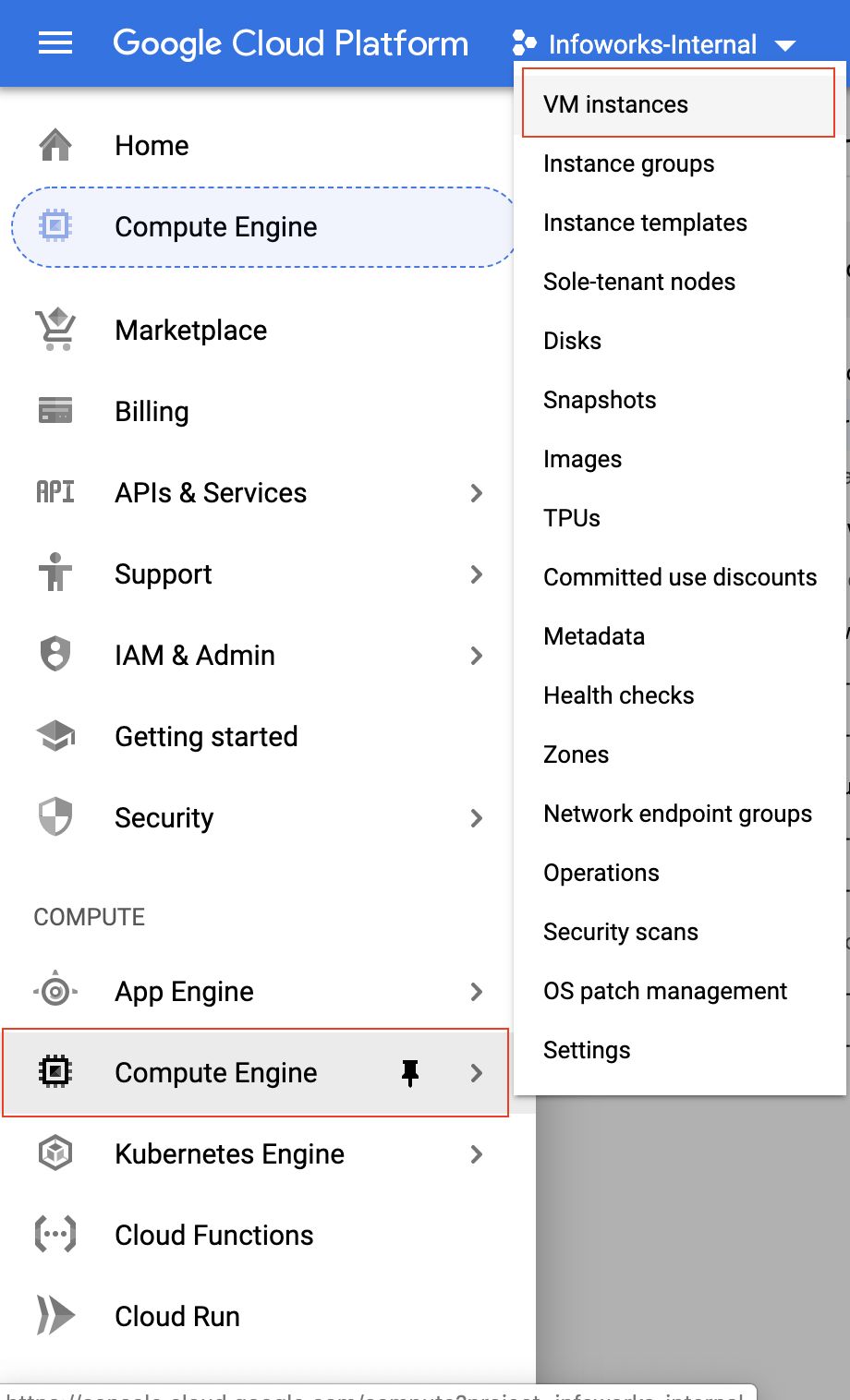

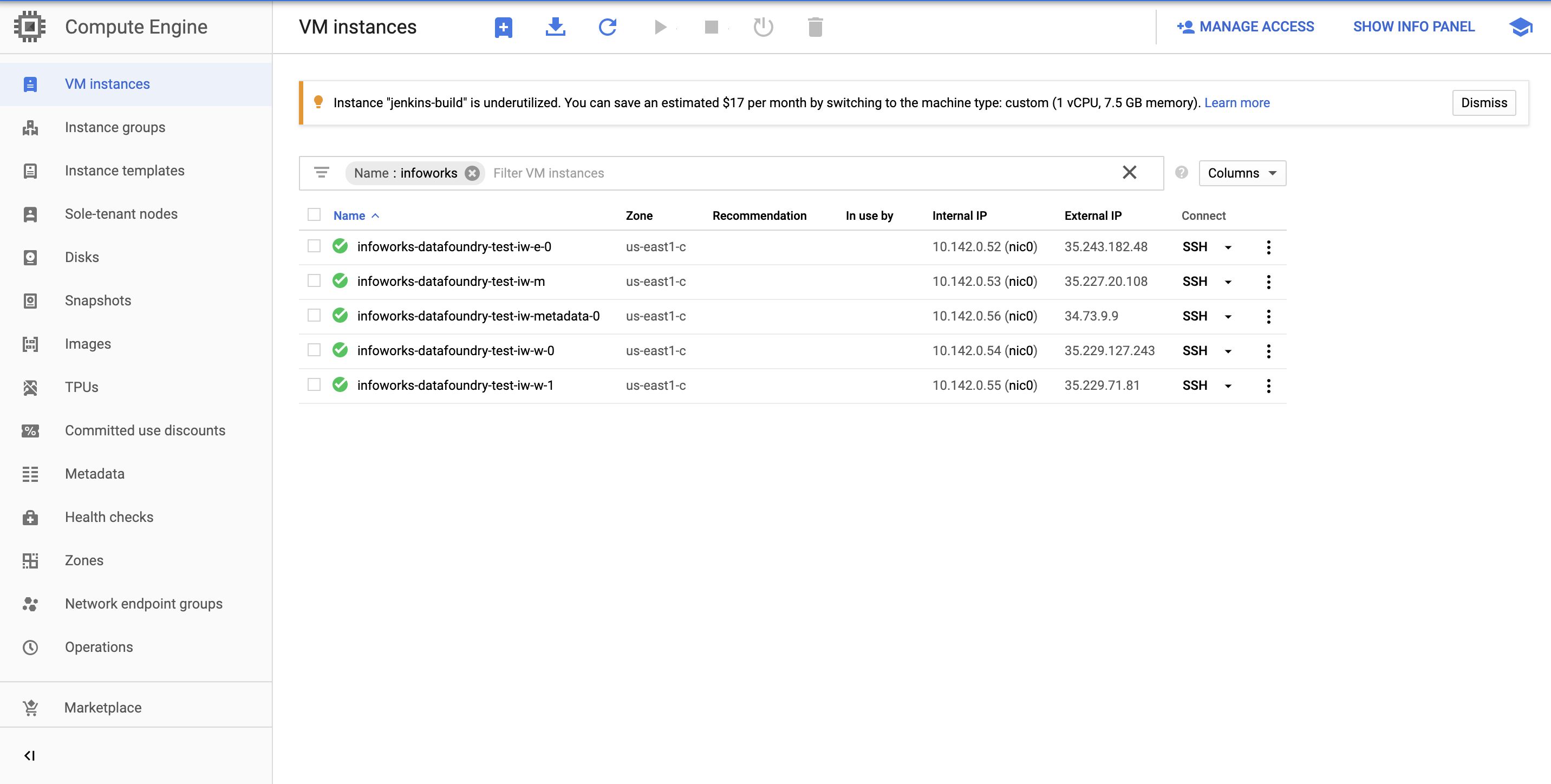

Viewing VM Instances

Log in to the Google Cloud Console. Click the icon on the top left and click Compute Engine > VM Instances.

The list of VM instances in the project is displayed, including the instances used by Infoworks.
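The same list is available from the command line with gcloud compute instances list --format=json. The sketch below wraps that command and summarizes the result as (name, status) pairs. It assumes the Google Cloud CLI is installed and authenticated for the project. Filtering by a name substring is a hypothetical convenience, since this page does not state how the Infoworks instances are named.

```python
import json
import subprocess


def list_instances() -> list[dict]:
    """Return the instances in the active gcloud project as dicts."""
    # Requires an installed, authenticated Google Cloud CLI.
    result = subprocess.run(
        ["gcloud", "compute", "instances", "list", "--format=json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


def summarize(instances: list[dict], name_filter: str = "") -> list[tuple[str, str]]:
    """Return (name, status) pairs for instances whose names contain name_filter."""
    return [
        (inst["name"], inst.get("status", "UNKNOWN"))
        for inst in instances
        if name_filter in inst["name"]
    ]
```

For example, summarize(list_instances(), "my-deployment") keeps only instances whose names contain the string my-deployment, where my-deployment stands in for your own deployment name.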

Scaling the Dataproc Cluster

To scale the Dataproc cluster up or down, perform the following steps:

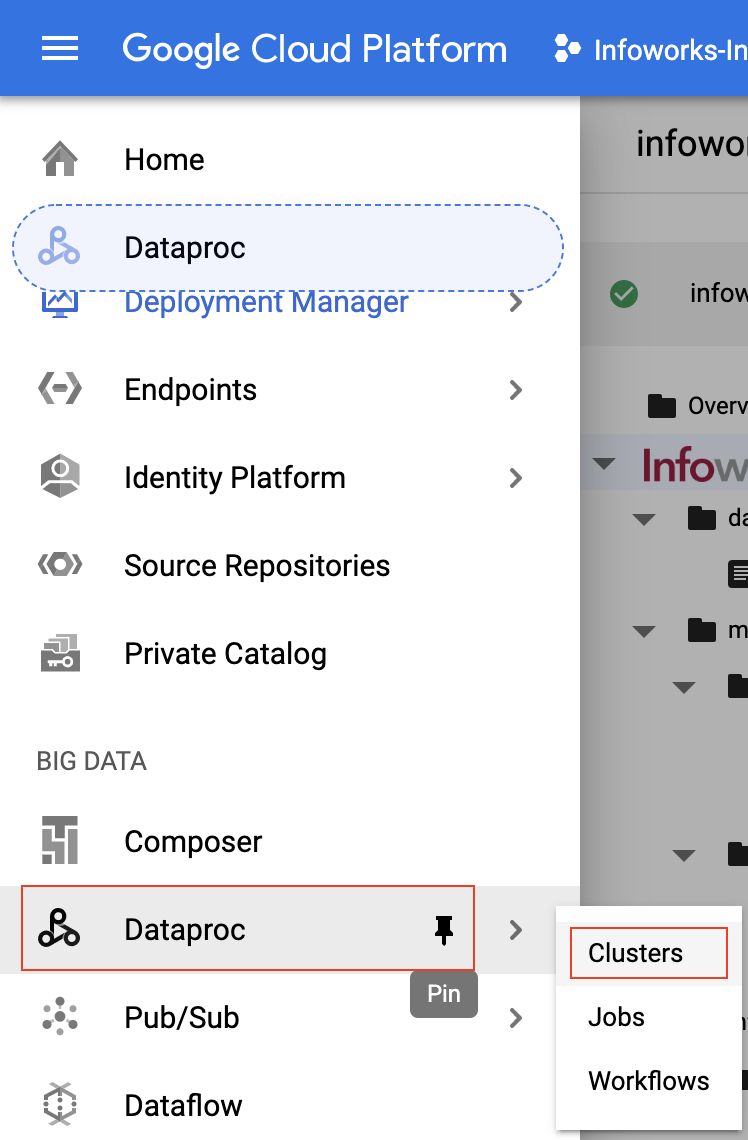

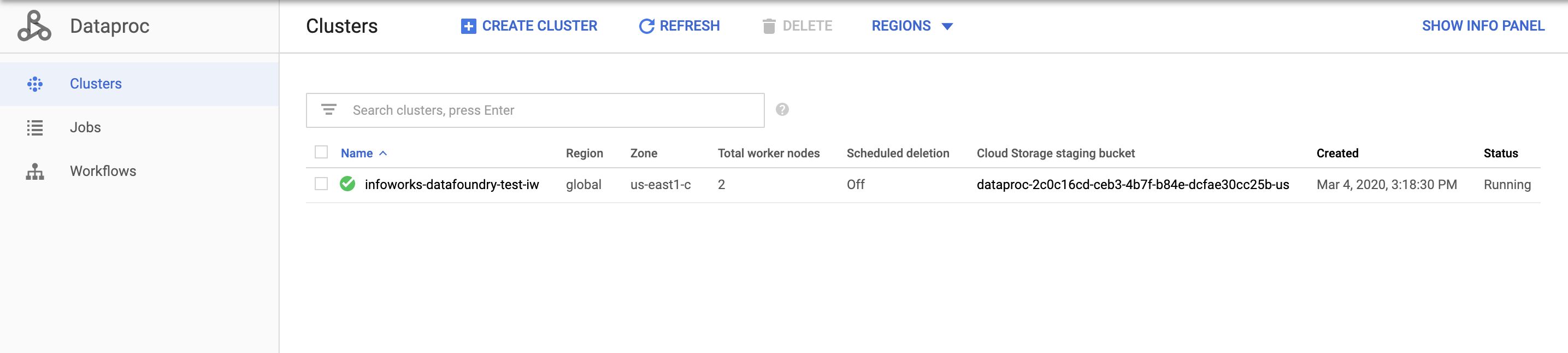

- Click the icon on the top left and click Dataproc > Clusters.

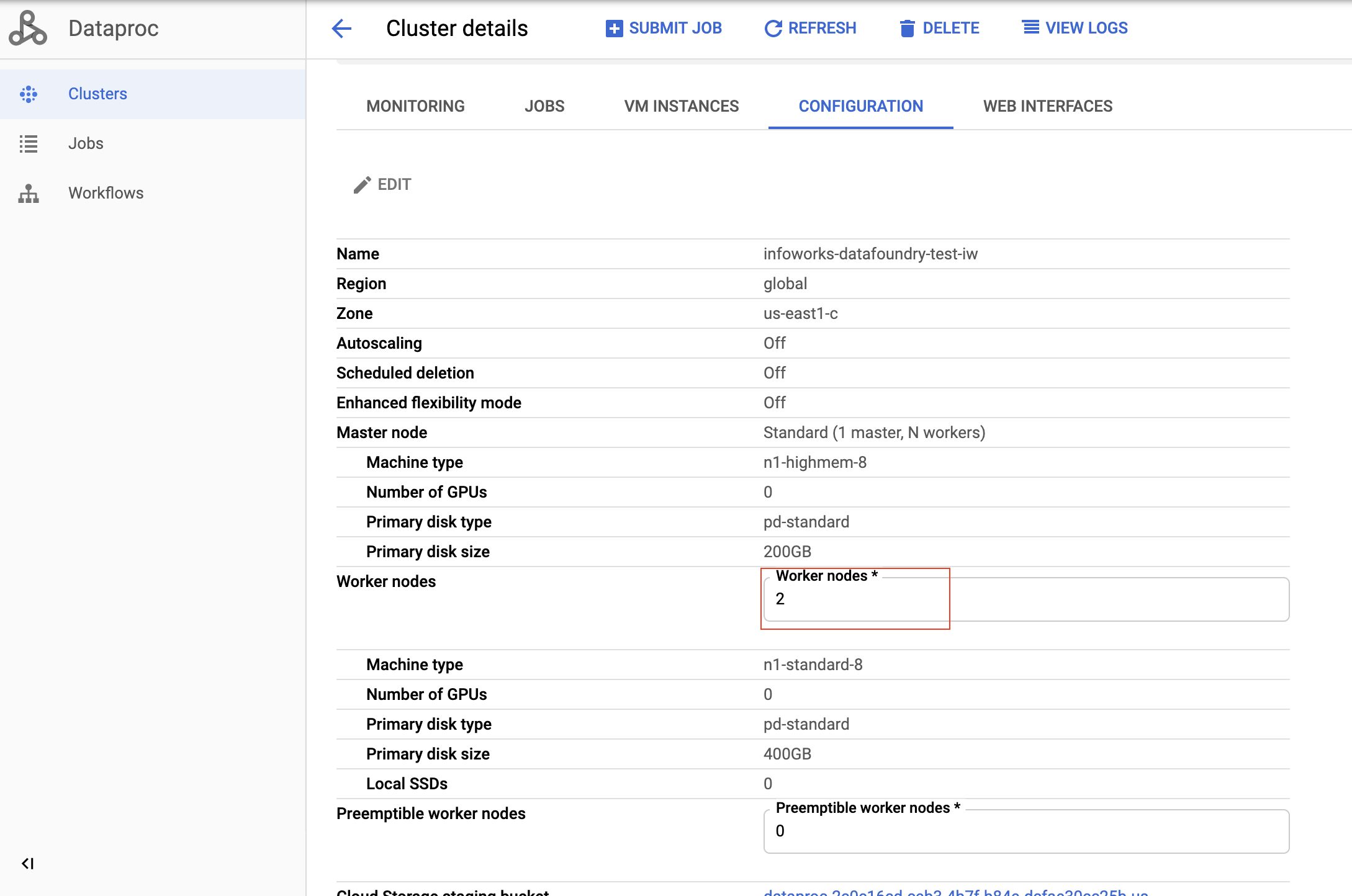

- Click the specific cluster you want to scale.

- Click the Configuration tab and click the Edit button.

- Scale the worker nodes up or down.

- Click Save. The number of worker nodes is updated.
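The console steps above can also be performed with the Google Cloud CLI command gcloud dataproc clusters update CLUSTER --region=REGION --num-workers=N. The sketch below builds and runs that command from Python. The cluster name and region are placeholders you must supply. The floor of 2 workers mirrors the minimum stated in Dataproc Settings and is applied here as a safeguard.

```python
import subprocess


def scale_command(cluster: str, region: str, num_workers: int) -> list[str]:
    """Build the gcloud command that resizes a Dataproc cluster's workers."""
    # The deployment requires at least 2 worker nodes; keep the same floor.
    if num_workers < 2:
        raise ValueError("num_workers must be at least 2")
    return [
        "gcloud", "dataproc", "clusters", "update", cluster,
        f"--region={region}",
        f"--num-workers={num_workers}",
    ]


def scale(cluster: str, region: str, num_workers: int) -> None:
    """Run the resize; requires an installed, authenticated Google Cloud CLI."""
    subprocess.run(scale_command(cluster, region, num_workers), check=True)
```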

Metadata Server Access Credentials

The Infoworks Metadata server is hosted on a dedicated VM instance. It hosts a MongoDB database with two users: admin and infoworks.

Write to cloud@infoworks.io to obtain the default passwords of these users.
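If you connect to the metadata server's MongoDB with a client, the credentials in the connection string must be percent-encoded when they contain characters such as @ or :. The sketch below builds such a mongodb:// URI. The host address is hypothetical, 27017 is MongoDB's default port, and authSource=admin is an assumption; the actual port and authentication database for this deployment are not stated on this page.

```python
from urllib.parse import quote_plus


def mongo_uri(user: str, password: str, host: str, port: int = 27017,
              auth_db: str = "admin") -> str:
    """Build a mongodb:// connection string with percent-encoded credentials."""
    # Characters such as '@', ':' or '/' in credentials must be escaped.
    return (
        f"mongodb://{quote_plus(user)}:{quote_plus(password)}@{host}:{port}/"
        f"?authSource={auth_db}"
    )


# Hypothetical host and password.
print(mongo_uri("infoworks", "p@ss:word", "10.128.0.7"))
# mongodb://infoworks:p%40ss%3Aword@10.128.0.7:27017/?authSource=admin
```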

Getting Help

You can contact Infoworks support with any queries at support@infoworks.io.

For more details, refer to the Infoworks Knowledge Base and Best Practices.

(C) 2015-2022 Infoworks.io, Inc. and Confidential