Title

Create new category

Edit page index title

Edit category

Edit link

Creating Pipeline

Infoworks Data Transformation is used to transform data ingested by Infoworks DataFoundry for various purposes like consumption by analytics tools, pipelines, Infoworks Cube builder, export to other systems, etc.

For details on creating a domain, see Domain Management.

Following are the steps to add a new pipeline to the domain:

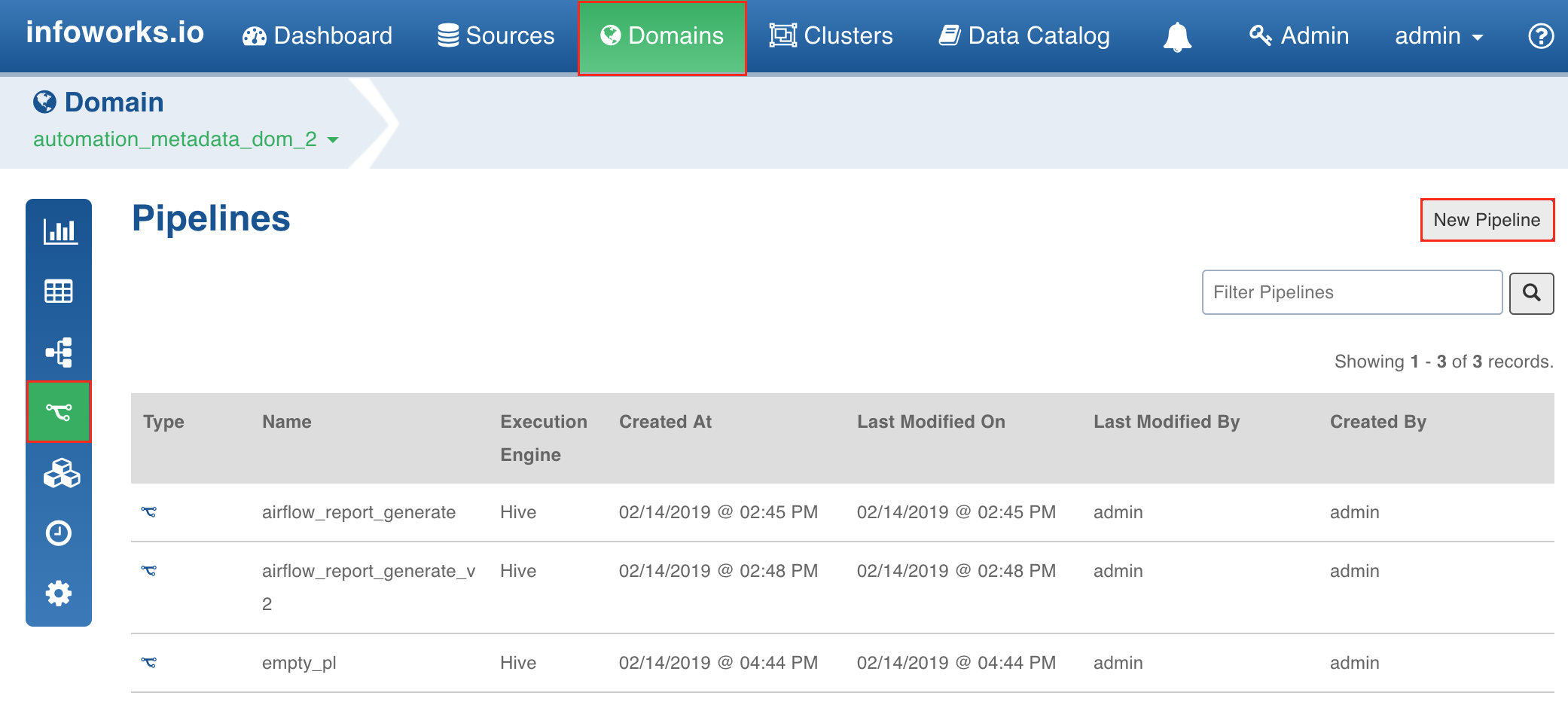

- Click the Domains menu and click the required domain from the list. You can also search for the required domain.

- In the Summary page, click the Pipelines icon.

- In the Pipelines page, click the New Pipeline button.

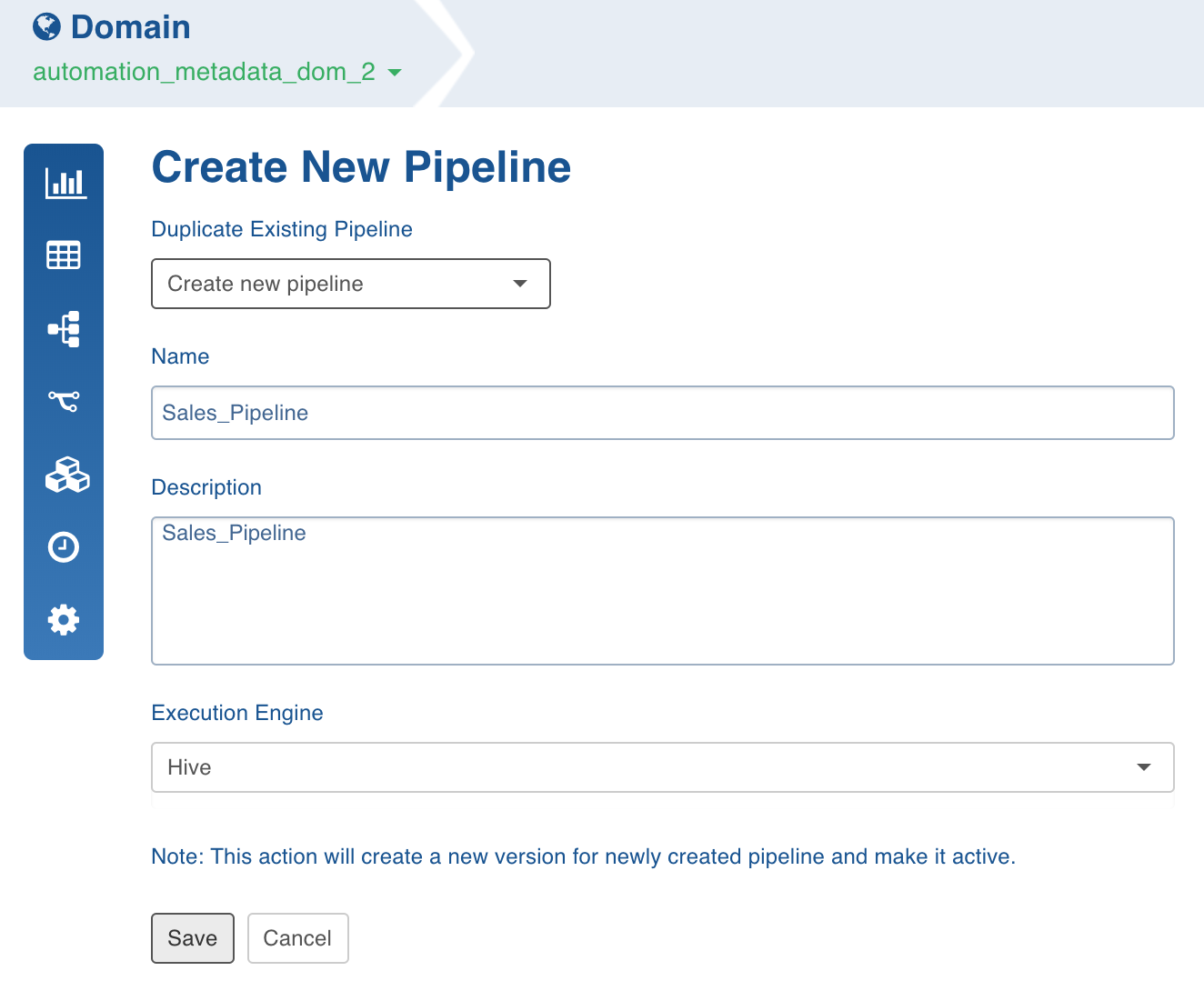

- In the New Pipeline page, select Create new pipeline.

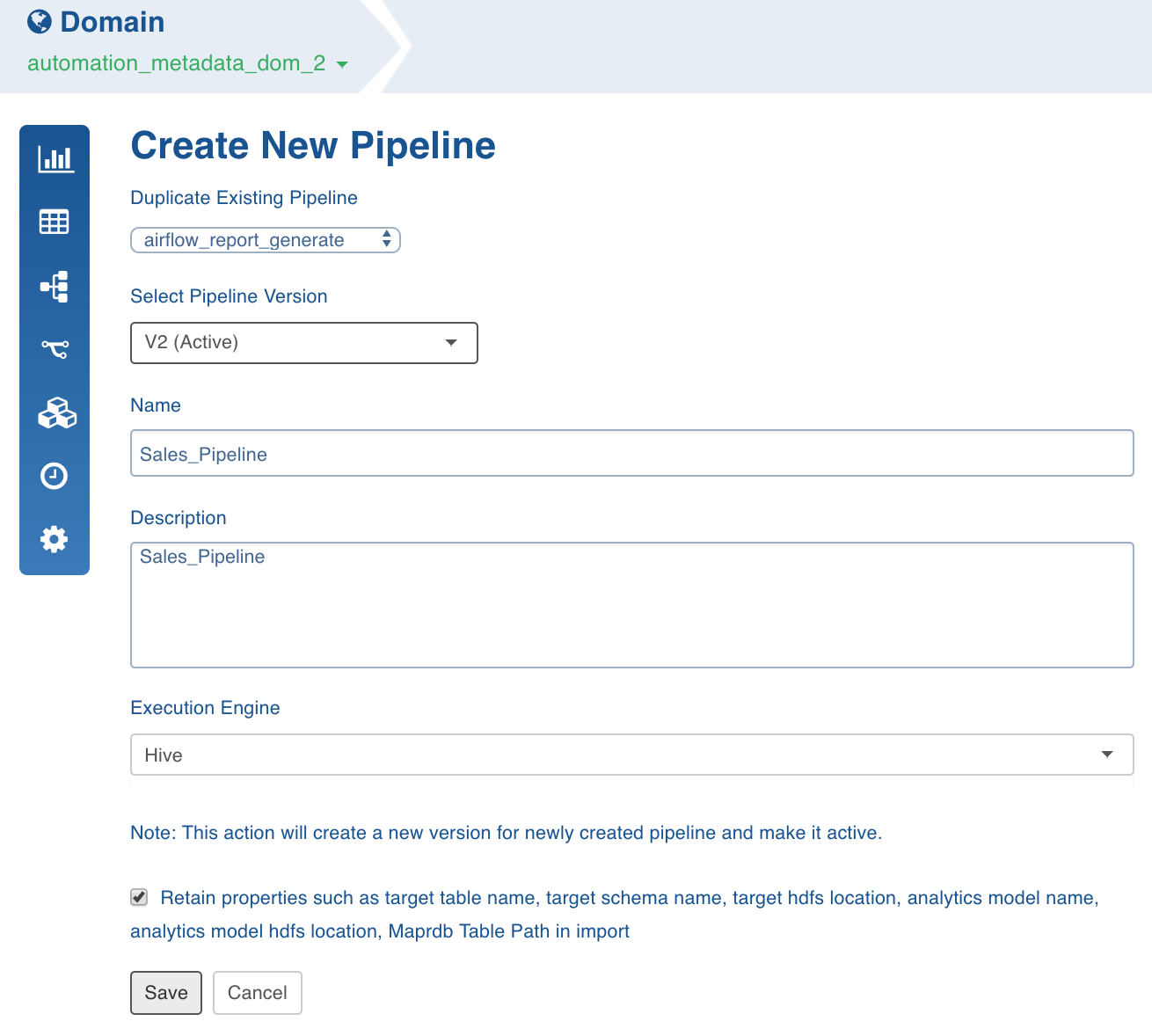

To duplicate an existing pipeline, select the pipeline from the Duplicate Existing Pipeline drop-down list and enable the checkbox to retain properties such as target table name, target schema name, target HDFS location, analytics model name, analytics model HDFS location, MapR-DB table path in import.

- Enter the Name and Description.

- Select the Execution Engine type. The Execution Engines supported are Hive, Spark and Impala.

- Click Save. The new pipeline will be added to the list of pipelines in the Pipelines page.

Using Spark: Currently v2.0 and higher versions of Spark are supported. Spark as execution engine uses the Hive metastore to store metadata of tables. All the nodes supported by Hive and Impala are supported by spark engine.

Known Limitations of Spark

- Parquet has issues with decimal type. This will affect pipeline targets that include decimal type. Recommend to cast any decimal type to double when using in a pipeline target

- The number of tasks for reduce phase can be tuned by using sql.shuffle.partitions setting. This setting controls the number of files and can be tuned per pipeline with df_batch_sparkapp_settings in UI.

- Column names with spaces are not supported in Spark v2.2 but supported in v2.0. For example, column name as ID Number is not supported in spark v2.2.

Submitting Spark Pipelines

Spark pipeline can be configured to run in client or cluster mode during pipeline creation. In client mode, the Spark pipeline job runs on edge node and in cluster mode on yarn node.

If yarn cluster is kerberos enabled, set the following configurations in ${IW_HOME}/conf/conf.properties:

- iw_security_kerberos_enabled=true

- iw_security_kerberos_default_principal=(Infoworks user prinicipal)

- iw_security_kerberos_default_keytab_file=(Infoworks user keytab)

Following are the steps to configure a pipeline in cluster mode:

- Add the following key-value in pipeline advance configuration: job_dispatcher_type=spark

Set the following configuration in the ${df_spark_configfile_batch} file:

- spark.hadoop.hive.metastore.uris=(Hive metastore uris)

- spark.hadoop.hive.metastore.sasl.enabled=true if kerberos enabled

- spark.hadoop.hive.metastore.kerberos.principal=(Hive metastore principal)

- spark.hadoop.hive.metastore.kerberos.keytab.file=(Hive metastore key tab file)

- Create IW_HOME directory on HDFS and copy the ${ IW CONF} directory from local to IW_HOME on HDFS and set spark.driver.extraJavaOptions=-DIW_HOM E =(HDFS IW_HOME path).

Remove Hive jars from df_batch_classpath in ${IW_HOME}/conf/conf.properties.

When running in cluster mode, the pipeline job uploads lib jars on HDFS. By default, the same HDFS path and local path is used while uploading jars from local. For example, if jar path on local is file:/opt/info/lib/df/*, the path, hdfs:/opt/info/lib/df/*, will be created on HDFS and jars from file:/opt/info/lib/df/* will be uploaded to hdfs:/opt/info/lib/df/*. To change the base HDFS lib path, add the following configuration in the ${IW_HOME}/conf/conf. properties file on the edge node: df_hdfs_lib_base_path=(HDFS lib base path).

Spark 2.1 does not allow having same jar name multiple times, even in different paths. If an error occurs, set df_classpath_include_unique_jars=true in ${IW_HOME}/conf/conf.properties.

Submitting Spark Pipeline through Livy

Spark pipeline jobs can also be submitted via Livy.

Following are the steps to submit Spark pipeline job via Livy:

- Add the following key-value in pipeline advance configuration: job_dispatcher_type=livy

- Create a livy.properties file.

- In the $IW_HOME/conf/conf.properties file, set df_livy_configfile=(absolute path of livy.properties file).

Set the following configurations in livy.property: livy.url="https://<livy host>:<livy port>

If Livy is configured to run in yarn-cluster mode, create IW_HOME directory on HDFS and copy the ${ IW_CONF} directory from local to IW_HOME on HDFS and set spark.driver.extraJavaO ptions=-DIW_HOME =(HDFS IW_HOME path) in livy.property.

If Livy is Kerberos enabled, set the following configurations:

- livy.client.http.spnego.enable=true

- livy.client.http.auth.login.config=(Livy client jaas file location)

- livy.client.http.krb5.conf=(krb5 conf file)

You can also add other optional Livy client configurations. For more information, see here.

By default, Spark pipelines are submitted in the existing Livy session. If a Livy session is not available, a new session is created and pipelines are submitted in the new session. Spark pipeline can not share a Livy session created by any other process; if Livy sessions are shared among other processes, set livy_use_existing_session=false in livy.properties.

A Livy client Jass file must include the following entries:

xxxxxxxxxxClient { com.sun.security.auth.module.Krb5LoginModule required useKeyTab=true keyTab="<user keytab>" storeKey=true useTicketCache=false doNotPrompt=true debug=true principal="<user principal>" ;};NOTE: Infoworks Data Transformation is compatible with livy-0.5.0-incubating and other Livy 0.5 compatible versions.

Using H2O as Machine Learning Engine

To use H2O as machine learning engine, perform the following:

- Download the H2O jar here, based on your current spark version.

- Navigate to the /opt/infoworks/conf/conf.properties file.

- Add the H2O jar path to df_batch_classpath and df_tomcat_classpath.

Best Practices

For best practices, see General Guidelines on Data Pipelines.

For more details, refer to our Knowledge Base and Best Practices!

For help, contact our support team!

(C) 2015-2022 Infoworks.io, Inc. and Confidential