Title

Create new category

Edit page index title

Edit category

Edit link

Advanced Ingestion Functionalities

Infoworks includes the following ingestion functionalities:

- ORC/Parquet Support

- Handling NULL Values

- Boundary Query

- Schema Synchronization Support

- Table Configuration Automation

- Continuous Merge

ORC/Parquet Support

NOTE: ORC and Parquet are the storage formats in which the ingested source file gets stored.

In Schedule Segmented Load, since the storage format selected is Parquet, the timestamp_inserts_updates storage format is configured as PARQUET.

During full ingestion of tables, the tables get stored in the ORC or Parquet formats as per the selection made during source configuration.

Handling NULL Values

This section describes the process and impact of ingesting records having NULL values in REC_ADD_TS (REC_ADD_DT) and REC_CHG_TS(REC_CHG_DT) columns for TIMESTAMP(DATE) based incremental tables during historical data ingestion (full ingestion).

This feature impacts the Ingestion and Data Transformation as follows:

- Full Ingestion: During full ingestion, the records having NULL values in REC_ADD_TS (REC_ADD_DT) and REC_CHG_TS(REC_CHG_DT) columns for TIMESTAMP(DATE) based incremental tables will be fetched from the database. For these records, ZIW_SOURCE_START_DATE and ZIW_SOURCE_START_TIMESTAMP values will be NULL in the target tables. If either of the timestamp columns or date columns is used in SPLIT BY configuration, the records with the NULL values will not be fetched during ingestion.

- Incremental Ingestion: During the incremental ingestion, records having NULL values for both the columns will not be fetched from the database. If only one value is NULL, the record will be fetched from the database. For these records, ZIW_SOURCE_START_DATE and ZIW_SOURCE_START_TIMESTAMP values will be NULL in the target tables.

- Data Transformation: There is no impact because of NULL values in the downstream. Data Transformation uses only ZIW_TARGET_START_TIMESTAMP. Export functionality will be impacted only if all the values of ZIW_SOURCE_START_TIMESTAMP are NULL, which is unlikely.

Set the following admin configurations to enable or disable this feature:

- fetch_null_timestamped_records=true: Records having NULL values will be fetched.

- fetch_null_timestamped_records=false: Records having NULL values will be ignored during ingestion.

Boundary Query

NOTE: Boundary Query is used to determine splits for ingestion jobs pulling data from RDBMS sources. If the split-by column split values are known in advance, for example, monthInYear (1-12), dayInMonth(1-31). Users can save processing time by specifying the query upfront, for example, select 1,12 from dual.

The Boundary Query checkbox is available when you select split by from Infoworks DF.

Once you check Enable Boundary Query, a text box to enter your custom query will be displayed.

Schema Synchronization Support

This section describes how Infoworks handles schema synchronization in Ingestion for:

- Addition of columns

- Deletion of columns

- Modification of columns

This section also provides specific instructions that you must perform when automatic schema synchronization is not supported.

Addition of Columns

If schema synchronization is enabled for a table, Infoworks ingestion process automatically fetches the new column(s) and merges them with existing data. New column(s) are applied to the existing data on Hadoop for both the current view and historic view tables.

In this process, the new column(s) in the backfilled records will be populated with one of the following:

- Default values of the column(s), if there is a default value.

- NULL, if you do not want to backfill with default values.

Once the schema patch is applied, the regular incremental ingestion process continues to fetch and merge delta. If the configuration of schema synchronization is not enabled, Infoworks process ignores the column and continues with incremental ingestion.

NOTE: For Oracle log-based CDC, the database administrator must ensure to update the dictionary.

Deletion of Columns

Infoworks detects the column deletion and notifies you to reset the table in summary logs.

NOTE: You must truncate reload the table.

Modification of Columns

Infoworks detects the column modification and notifies you to reset the table in summary logs.

NOTE: You must truncate reload the table.

Table Configuration Automation

NOTE: Infoworks DF includes many table configurations that you must set before starting any ingestion. Though many of these are not mandatory in full ingestion tables, the objective of this feature is to suggest you the recommended values from the database metadata. You can always change the configurations.

Infoworks DF suggests you a set of configurations by auto-filling them. Infoworks metadata crawl analyzes the table columns and auto-fills for the following columns:

- Natural Key: Value for this configuration is fetched from the database metadata. If the source table has natural key defined on the schema, it will be automatically configured.

- Split By: During metadata crawl, if the primary key column has a numeric field defined on the source table, it will be configured automatically.

- Partition Hive Table on: Partitioning hive table is the way of creating indexing on hive. Metadata crawl fetches the indexing columns from the source table, other than primary indexes, and configures them.

NOTE: This feature is applicable for only RDBMS metadata crawls.

Continuous Merge

Infoworks supports continuous merging of delta records with the base tables in the Hadoop or Cloud cluster. This allows you to continually ingest changed data at low latencies from sources, while still making the base and refreshed data available for downstream access.

Infoworks maintains additional tables for every synchronized Hive table that has been created by Infoworks ingestion. So for every such Hive table called TableA in the data lake, a view is created in the same Hive schema called realtime_TableA that contains the most fresh data from the incremental ingestion process. This realtime table contains a combination of the CDC delta records and the merged data. This table allows you to access the merged data before the actual merge occurred. The view query merges the CDC-data and the full-data while the user is reading it.

TableA, therefore, contains the last merged data and is available for downstream applications that have a high read-performance requirement, or does not have stringent SLA on data freshness.

Realtime_TableA contains the latest data from the CDC and is suitable for applications that have a stringent SLA or need to access changed data without waiting for a merge process to complete.

Locking

NOTE: The merged data does not reflect into the TableA until a switch is performed. A switch is a process that replaces the old secondary partition folders with new secondary partition folders with the merged and updated CDC data.

For a table, switch will be performed as follows:

- A lock is taken on the primary partition of the table (if table is not primary partitioned, the lock is taken on the whole table).

- All the secondary partitions for this primary partition is switched.

- The lock is released.

- The corresponding CDC data is deleted from the CDC folder.

- The table X and the view X_realtime must give same results for all queries. If you click the Ingest Now button, CDC merge occurs followed by a switch.

Near-Realtime Use Cases

If the use case is to always access the data in near-realtime, follow the following approach:

Near-realtime use cases requires table to be updated with the latest data within minutes of the source update and requires the table to be available for querying most of the time. The table is locked or unsuitable (when locking is OFF) for querying when the table is being switched after the merge and user queries might be blocked or fail. For such cases, you must query the realtime view which is available with the latest data while CDC and merge are running on the table. The actual table will also be available during CDC and merges but the data will be updated in the actual table only after a switch.

For example, if merge and switch are scheduled for every midnight and CDC occurs every 15 minutes, then the realtime table will have the new data every 15 minutes while, the actual table update will be available at midnight. This strategy is useful when the merge takes more than few minutes and the table needs to be available for continuous query throughout the day.

Hence, you can either run/schedule the ingest now job which handles the CDC, merge and switch consecutively, or, you can schedule the CDC, merge and switch jobs independent of each other.

NOTE: The actual table does not show the new data until a switch has been executed on the table.

By default, locking is OFF. To turn locking ON for a table, ensure that locking is enabled in the hive and set the MERGE_LOCKING_ON configuration key to true (default is false). This configuration is available on the table, source, and admin level.

Rest API Call for Switch Job

[POST] :/v1.1/source/table_group/ingest.json?``table_group_id=xxxxx&ingestion_type=switch&auth_token=xxxx

Infoworks CLI command for Switch Job

/path/to/cli-script ingest --source --group --ingest-type switch --username --password

NOTE: Continuous Merge and realtime views are only applicable for incremental tables.

Secure Data Transmission

In this form of Transport Layer Security, a one-way authentication is performed. This means that the database server has to authenticate itself to the client (Infoworks) during the handshake. This is achieved by placing the server certificate in the client truststore on the edge nodes and the data nodes.

Currently we support secure data transmission (network encryption with server validation) for the databases mentioned below. By default, this is the java truststore, cacerts. The JDBC URL for each of these databases must be modified with some additional parameters.

NOTE: By default, the server certificates must be placed in the default JVM truststore, cacerts, of the edge nodes and data nodes. If you are not using the default configuration for Oracle and Sybase, and to customize the truststore paths and store the certificates there, see the following Custom TrustStores for Oracle and Sybase section.

| Database | JDBC URL | Extra Parameters |

|---|---|---|

| MySQL | jdbc:mysql://127.0.0.1:3306/SAMPLE?verifyServerCertificate=true& useSSL=true&requireSSL=true | verifyServerCertificate Parameter to true for production and CA certificate. |

| MariaDB | jdbc:mariadb://localhost/SAMPLE?useSSL=true& trustServerCertificate=true | -trustServerCertificate is false for CA certificate |

| SQL Server | jdbc:sqlserver://localhost:1433;databaseName=SAMPLE; encrypt=true;trustServerCertificate=true | -trustServerCertificate is false for CA certificate |

| Teradata | jdbc:teradata://localhost/TMODE=ANSI,database=SAMPLE, ENCRYPTDATA=ON | |

| Netezza | jdbc:netezza://hostname:5480/sampleDB;securityLevel=onlySecured | security levels: onlySecured, preferredSecured |

| Oracle | jdbc:oracle:thin:@(DESCRIPTION=(ADDRESSLIST= (ADDRESS=(PROTOCOL=tcps)(HOST=hostname)(PORT=2484))) (CONNECTDATA=(SERVICE_NAME=servicename))) | Port number 2484 and TCPS Protocol preferred for Encryption - Uses JVM Default truststore |

| SAP Hana | jdbc:sap://hostname:39013/?encrypt=true&validateCertificate=true | Port number should be added as per the instance trustStore, trustStoreType and trustStorePassword parameters to be set in URL if JVM default truststore is not used. |

| DB2 | jdbc:db2://hostname:50001/SAMPLE:user=user-id;password=password;sslConnection=true; | NA |

Custom TrustStores for Oracle and Sybase

The following customizations are available for the TLS settings for Oracle and Sybase:

Source Level Configurations

- ENABLE_RDBMS_IMPORT_TLS (boolean): The default value is false. This value is set to proceed with custom truststore configurations, viewing logs, etc.

NOTE: Do not set this configuration if the default truststore and password is to be used.

- Enable_encryption_logs (boolean): The default value is false. Setting this value to true prints ssl debug logs.

- ingestion_truststore_path: The path of the truststore on the edgenode.

- ingestion_truststore_password: The password of the custom truststore on the edgenode.

- sqoop_truststore_path: The path of the truststore on the datanode.

- sqoop_truststore_password: The password of the truststore on the datanode.

NOTE: Ensure that the path and password to the truststore is same on all data nodes.

Data Masking

The data masking feature for RDBMS sources allows you to opt for masking certain columns of the table during ingestion. This masking is server-side, which means the data brought into the Infoworks node will already be masked. This feature helps in storing sensitive data that must not be stored in the data lake in its true form.

Following are the steps to enable data masking for a table:

- Navigate to the Metadata section of the specified table on the Tables page and click Edit Masking.

- Select the Mask-Column checkbox to mask the particular column.

Masking Types

Currently, Infoworks supports only the following types of data masking for RDBMS sources:

- Fixed Data Masking: The data in the column will be entirely replaced by the value in the Replace Pattern box and stored in the data lake. The target type in data lake will be String. Following are the data bases supported: Oracle, Teradata, SQL Server, DB2, MariaDB, Mysql, Sybase, SAP Hana, Redshift and Vertica.

- Regex-Based Data Masking: The data in the column will be replaced based on the match made by the regular expression in the Find Pattern text box. The regular expression format is database specific (POSIX for most sources). The target type in data lake will be String. Following are the data bases supported: Oracle, Teradata, Db2, Mysql and MariaDB.

NOTE: Currently, this type is only supported for Oracle, Teradata and MariaDB (v 10.5 upwards).

Find Pattern: For Regex-Based Masking, the Find Pattern field specifies the regular expression in the POSIX format, which will be matched in the column. Replace Pattern: The value in the Replace Pattern field will replace the matching regular expression (in case of Regex-Based) or the entire column (in case of Fixed Data Masking).

NOTE: The columns selected as masked columns cannot be used for any other purpose like, natural key, split-by keys, CDC keys, etc, and vice-versa. This is disabled by Infoworks.

Workload Management

The primary goal of workload management is to control the load (number of connections to the database) on source database while ingestion occurs. We can ingest data to Infoworks either by creating a table group or loading a table in segments (the latter one is useful for bigger tables).

This section covers workload management for both table group ingestion and segmented load ingestion while describing how we control number of connections to source.

Table Group Ingestion

Following are the steps to configure and ingest a table group:

- Click Sources on the main menu. The page with the list of sources is displayed.

- Click the required source for which the table group is to be configured. The page with the list of tables included in that source is displayed.

- Click Table Groups. The following is displayed.

- Click Add Table Group. The Add Table Group page is displayed.

Following table provides descriptions for all the fields in the Add Table Group page.

| Sl.No | Config Type | Config Name | Description |

|---|---|---|---|

| 1 | Table-group | Table Group Name | Name of the table group. |

| 2 | Table-group | Max. connections to source | Maximum number of source database connections allocated to this table-group. |

| 3 | Table-group | Max. Parallel Tables | Maximum number of tables that can be crawled at a given instance. |

| 4 | Table-group | Yarn Queue Name (optional) | Name of the yarn queue for ingestion and export jobs. |

| 5 | Table-group table | % Connection Quota | Percentage of "Max. connections to source" that a table is allocated with. |

- Enter the required values on all the fields and click Add Tables. The Add Tables page with the list of tables present in the source is displayed.

- Select the tables to be added and click Add Tables at the bottom of the page. The selected tables will be added.

If you want to remove any table from the table group, click Remove Tables. The following dialogue box containing the list of tables that can be removed is displayed.![] Select the tables that are not required and click Remove Tables.

NOTE: You must add at least one table for creating a table group.

- Assign connection quota to each table. Alternatively, you can click Auto allocate to assign the connection quota automatically based on the connections assigned to the table group.

- If required, schedule the table group ingestion by selecting options under Schedule Ingestion for Table Group.

- Click Save Configuration.

- Click View Table Group option. The following page is displayed.

- Click Ingest Now to ingest incremental delta from data source or, click Initialize And Ingest Now to truncate reload the table.

NOTE: System runs all the tables in parallel whose Connection Quota sums up to 100 (or close to 100). When one table crawl is complete, based on the available connections, system picks the next set of tables and ingests them.

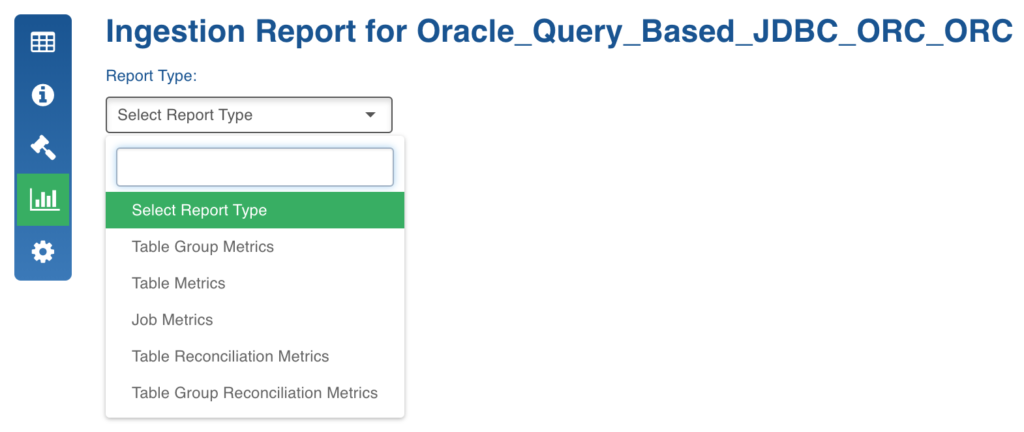

Generating Ingestion Report

The Ingestion Report feature generates ingestion-related metrics at the table, table group, and job levels.

Following are the steps to generate the ingestion reports:

In the Source Configuration page, click the Ingestion Reports icon. The Select Report Type drop-down displays the options.

- Table Group Metrics

This report displays aggregated ingestion metrics at table group and table level. The grey rows indicate table groups and white rows indicate the tables in the table groups. You can filter records using the Submitted After, Submitted Before and Table Groups options. You can export this report using the Export to CSV/Excel option.

- Table Metrics

This report shows ingestion metrics of jobs for a table. You can filter records using the Submitted After, Submitted Before options and select a table for records. You can also export this report using the Export to CSV/Excel option.

- Job Metrics

This report shows ingestion metrics of jobs for current source. You can filter records using the Submitted After, Submitted Before, Status, Job IDs and Job type options. You can also export this report using the Export to CSV/Excel option.

- Table Reconciliation Metrics

For details, see the Table Reconciliation Metrics section.

- Table Group Reconciliation Metrics

For details, see the Table Group Reconciliation Metrics section.

Table Groups Options

After ingesting a table group, you can click the Table Groups tab in the Source Configuration page and view the ingested table groups.

This section describes the icons in the Table Groups page.

- Configuration Audits When you click the Configuration Audits icon, the audit logs for the table group is displayed.

When you click show modifications links, the details of the modifications performed on the table group is displayed.

View Table Group

When you click the View Table Group icon, the table group details are displayed. It includes the following options:

- Generate Sample Data Now: Generates the sample data.

- Export Tables: Exports all the tables in a table group.

- Ingest Now: Ingests incremental delta from data source.

- Initialize And Ingest Now: Truncates and reloads the existing table, if any.

- Configure Table Group: Option to modify the table group configuration.

- Delete Table Group Data: Option to drop the tables in the target (hive) for the corresponding tables in the table group (the table group will still exist).

- Delete Table Group: Deletes only the table group and its corresponding configurations from the metadata store.

- Maintenance Options: This option is described in detail in the section below.

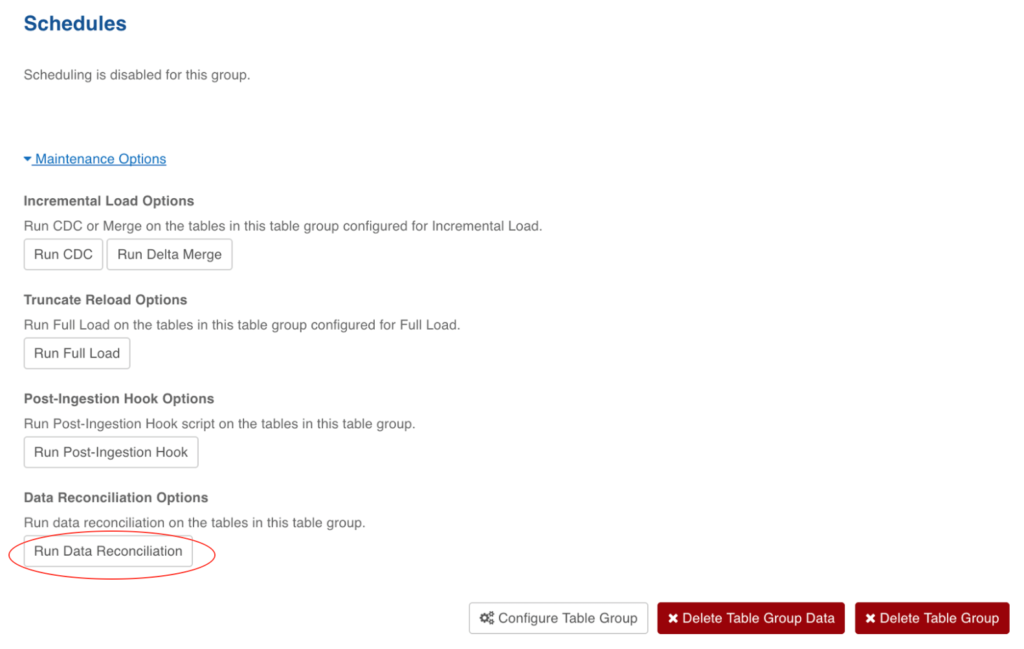

Data Ingestion Maintenance Options

Maintenance options in Ingestion provides access to ad-hoc requests from the user. These are available at table group level. Currently, maintenance options are available for any RDBMS JDBC based extractions and Teradata TPT based extractions.

Following are the steps to access the maintenance options at the table group level:

- Navigate to the required source.

- In the Source Configuration page, click Table Groups.

- For the required table group, click the View Table Group icon.

- On the Table Group Configurations page, click Maintenance Options.

Following are the maintenance options:

- Run CDC: Fetches the incremental delta from data source without merging records onto Hadoop.

- Run Delta Merge: Merges available incremental delta files to the already existing data on Hadoop.

- Run CDC and Run Delta Merge work like Ingest Now.

- Run Full Load: Truncates and reloads the table. It works just like Initialize and Ingest Now.

- Run Post-Ingestion Hook: Normally, Post-Ingestion Hook scripts will be executed as part of the ingestion job (full/incremental). To run the scripts externally, this will provide a UI hook at the table group level.

- Re-Organize Data: This feature allows you to change some of the table-level configurations without having to truncate-reload the table. For more details, see the Data Restructuring and Table Configuration Managementsection.

- Run Data Reconciliation: This feature gives the user a provision to reconcile table data on source and target. For more details, see the**Data Reconciliation section.

Teradata TPT

In case of TPT, the data extraction from source includes the following steps:

- Fetching data from Teradata Using TPT: The data from Teradata will be stored onto Hadoop in CSV format.

- Processing the CSV file: The CSV file data will be processed (and converted) to store in the user-selected format (ORC/Parquet).

Following are the maintenance options:

- Run Delta Stage Job: The incremental delta data from Teradata will be fetched and stored onto Hadoop in CSV format.

- Run Delta Process Job: The delta CSV file data will be processed (and converted) to store in the user-selected format (ORC/Parquet).

- Run Delta Merge: Merges the available incremental delta files to the already existing data on Hadoop.

- Run Full Load: Truncates and reloads the table. It works like Initialize and Ingest Now.

- Run Post-Ingestion Hook: Normally, Post-Ingestion Hook scripts will be executed as part of the ingestion job (full/incremental). To run the scripts externally, this provides a UI hook at the table group level.

- Run Full Load Stage Job: The data from Teradata will be stored onto hadoop in CSV format.

- Run Full Load Process Job: The CSV file data will be processed (and converted) to store in user-selected format (ORC/Parquet).

Post Ingestion Hook

Post hook ingestion is a feature where you can provide bash scripts to be run after ingestion completes. The post hook runs in the same job as the ingestion job. The scripts run after each table ingestion (full load, incremental load, and merge).

The script is invoked when the job is successful and also, when the job fails.

The script should be idempotent, that is, the action performed should not be affected by the number of times the script is called, as the script is called after each ingestion job.

Adding a Script

To add a post ingestion script, follow these steps:

NOTE: To add a script, you must have admin privileges and access to the edge node (the server where infoworks service is running).

- Click Admin on the main menu.

- Click the Post Hook Script icon.

- Click Add Script. The following page opens. Figure: Add Post Hook Script

- Enter the script name, description, and the path where the script is.

NOTE: The script must be executable.

- Click Add Script.

Configuring Source to Run Post Hook

If you want a script to run for all the tables in a source:

- Navigate to the configuration panel of that source.

- Scroll down to the Post-Ingestion Hook section.

- Click Enable Post-Ingestion Script Execution, select the required script and click Save. The post hook is now enabled for all the tables for that source.

Configuring Table to Run Post Hook

To configure a table to run a post hook script, follow these steps:

- Navigate to the specific table and click the configure icon.

- Click Post Hook Ingestion.

- Click Overwrite Post-Ingestion Script Execution checkbox and select the required script.

- Click Save Configuration. The post hook is now configured for the table.

Environment Variables

The environment variables from the ingestion job are passed to the post hook script. In addition to these variables, the following variables, through which the table context for the post hook is available, can also be used.

- IW_TABLE_ID: The tableId of the table for which the script is running.

- IW_HIVE_TABLE_NAME: The name of the table in hive, for the table for which script is running.

- IW_HIVE_SCHEMA: The schema in hive, where the hive table was created

- SOURCE_TABLE_NAME: The name of the table in the source database, from where table was ingested.

- SOURCE_SCHEMA: The schema in the source database, from where the table was ingested.

- IW_TABLE_HDFS_PATH: The HDFS path of the table, where the data is stored.

- IW_SOURCE_ID: The ID of the Infoworks source in which the job is running.

- IW_TABLE_GROUP_ID: The ID for the group for which the job is running.

- IW_TABLE_GROUP_NAME: The name for the group for which the job is running.

- IW_SOURCE_NAME: The name for the source, which has the table.

- IW_JOB_ID: The ID of the running job.

- IW_JOB_TYPE: The job type of the job run. Refer to Ingestion Job Types and Their Descriptions for the possible values.

- IW_JOB_STATUS: The status of the job. The possible values are "successful" and "failed".

Post-Hook Ingestion Known Issue

The script might not run when the job fails due to java heap issue or when the user cancels the job.

Data Reconciliation

Infoworks Data Reconciliation is a process to validate ingested data into Hadoop/Hive, against the data in source systems. Data reconciliation will be supported only for RDBMS databases. This solution is applicable for all types of data synchronization mechanisms (Timestamp based, Query based, Log Based, BatchID based) in RDBMS ingestion.

Prerequisite

Ensure that the table includes a date/timestamp column on which data will be filtered for reconciliation.

NOTE: You can configure reconciliation for tables in RDBMS Sources. Reconciliation configuration can be set at the table configuration page.

Procedure

To perform a data reconciliation, follow these steps:

- Navigate to the Source which contains the table to be reconciled.

- Locate the table and click Configurations icon next to the table.

- Click Modify Configuration. The following page opens. Figure: Source Configuration Page

- Click Data Reconciliation Configuration. The following page opens. Figure: Data Reconciliation Page

- Click Add Query. The following page displays.

The Type drop-down has two options:

- Type: Used to define type of query (Row Count or Aggregation).

- Reconcile On: Used to define the date/timestamp column which will be used for filtering data for reconciliation.

- Reconciliation Interval: Interval for which reconciliation needs to be done. The start and end timestamps of the intervals will be compared against Reconcile On column, which you configured. The supported Reconciliation Intervals are:

- Daily: Reconciliation happens daily. The exact interval of daily can be computed from the configuration of Compute Interval From

- If you select Server Timestamp, then the interval will be computed from the current server timestamp and the reconciliation will be done for the previous day's ingested data.

- If you select Reconciliation Column, then the interval will be computed from the MAX (Reconciliation Column) and the reconciliation will be done for the previous day of MAX ( Reconciliation Column) ingested data.

- Last Month: Reconciliation for all records for the last calendar month.

- Last Week: Reconciliation for all records in the last completed week. Week starts on Sunday and ends on Saturday.

- This Month: Reconciliation for all records in current month. The exact interval of this month can be computed from the configuration of Compute Interval From

- If you select Server Timestamp, then the reconciliation will be done from the starting of the current calendar month till the current server timestamp.

- If you select Reconciliation Column, the reconciliation will be done for the starting of the current calendar month till MAX ( Reconciliation Column) ingested data.

- This Week: Reconciliation for all records in current week. The exact interval of this week can be computed from the configuration of Compute Interval From

- If you select Server Timestamp, then the reconciliation will be done from the starting of the current week till the current server timestamp.

- If you select Reconciliation Column, the reconciliation will be done for the starting of the current week till MAX (Reconciliation Column) ingested data.

- Fixed: Reconciliation for all records in time interval. You must define From Date and To Date

- All Dates: Reconciliation will be done for all the ingested data. In this case, end timestamp will be computed using max (Reconciliation Column) on hive and perform the reconciliation with this value on source and target.

- Aggregation Function: Computes aggregation of data on source and target by configuring the aggregate column and group by

- Aggregation Column: Column on which aggregation function can be applied.

- Group By Column: Columns on which group by clause can be applied in the aggregation query.

- Enable Where: Enable this check box to define a where condition to filter the data on source and target while performing data reconciliation. A sample where condition is displayed in the UI.

WARNING: You must not enter the where construct but only the actual condition.

NOTE: You can add or remove the queries for reconciliation.

- Click Save to save the configuration.

WARNING: Table must have date/timestamp column to configure reconciliations. Else, the error message, Date/Timestamp column is mandatory for table data reconciliation, **is displayed.

If there is a change in table columns on which you have configured reconciliation, you must manually update the configuration from Data Reconciliation Configuration page.

Querying Data Reconciliation Status

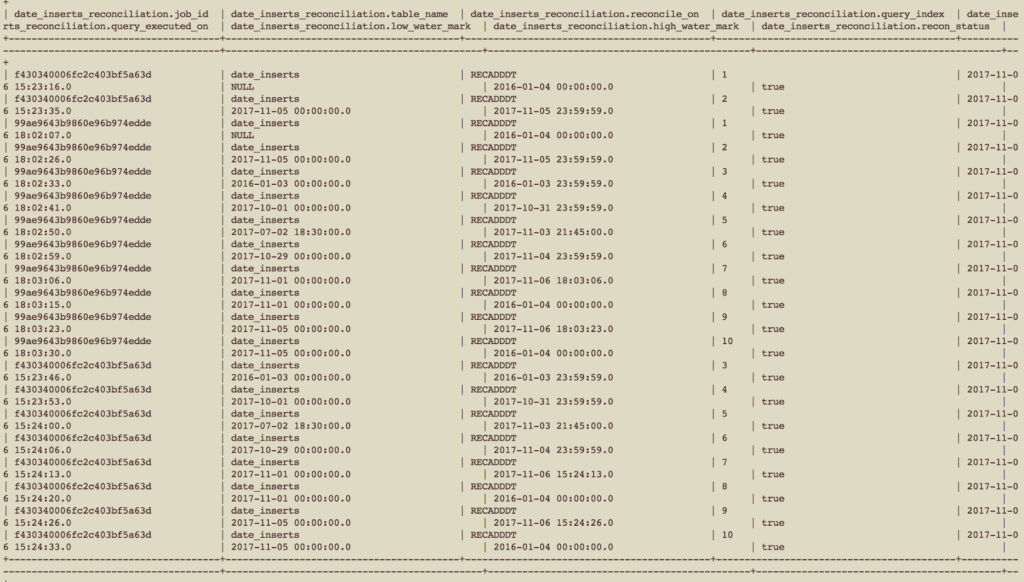

For every reconciliation configured table, after the data reconciliation is done, the reconciliation status is stored in hive table level. It is created in the same schema wherever the actual data table is created. Below is a screenshot for one such table. The table name for the reconciliation status table is <table_name>_reconciliation.

The fields of the table are as described below:

- job_id: The Infoworks job ID which runs the reconciliation. It is a string type column.

- table_name: Name of the table on which reconciliation is done. It is a string type column.

- reconcile_on: Column name on which the data reconciliation is done for this table. It is a string type column.

- query_index: Serial number of the query that you have defined in the reconciliation configuration. It is an int type column.

- query_executed_on: At what time of the server time that the query is executed. It is a timestamp type column.

- low_water_mark: The start timestamp for the reconciliation query. It is a timestamp type column.

- high_water_mark: The end timestamp for the reconciliation query. It is a timestamp type column.

- recon_status: Whether the reconciliation status is successful or not. It is a boolean type column.

Triggering Reconciliation Job

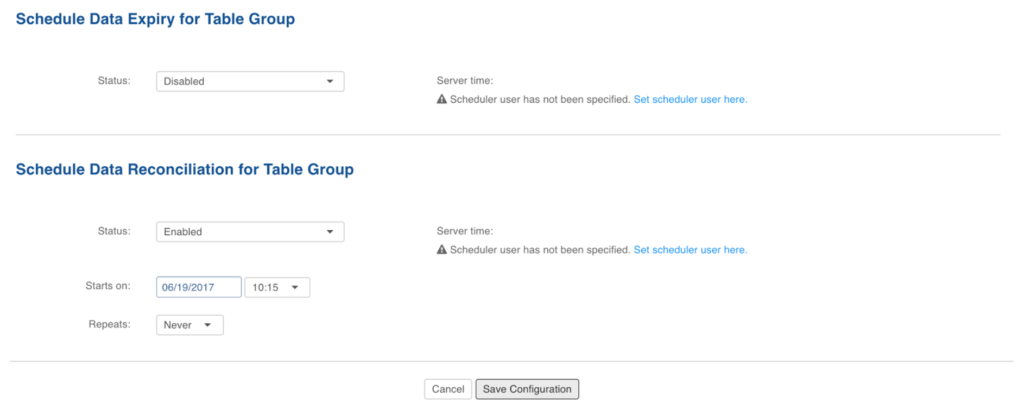

Reconciliation job can be triggered in the following two ways:

- Using UI Hook: This hook is available in the maintenance options of a table group, as shown below.

- Using a scheduler: You can schedule a reconciliation job. This feature is very useful for periodic reconciliation, which reconcile for last day, last 7 days and last 30 days You can schedule reconcile job at a table group level, as shown below.

Running Reconciliation Job

Once the reconciliation job is triggered, the queries and corresponding results can be seen in the summary logs of the ingestion logs page, as shown below. Figure: Running Reconciliation Job

Generating Reconciliation Metrics

There are two types of reconciliation metrics that you can generate:

- Table Reconciliation Metrics

- Table Group Reconciliation Metrics

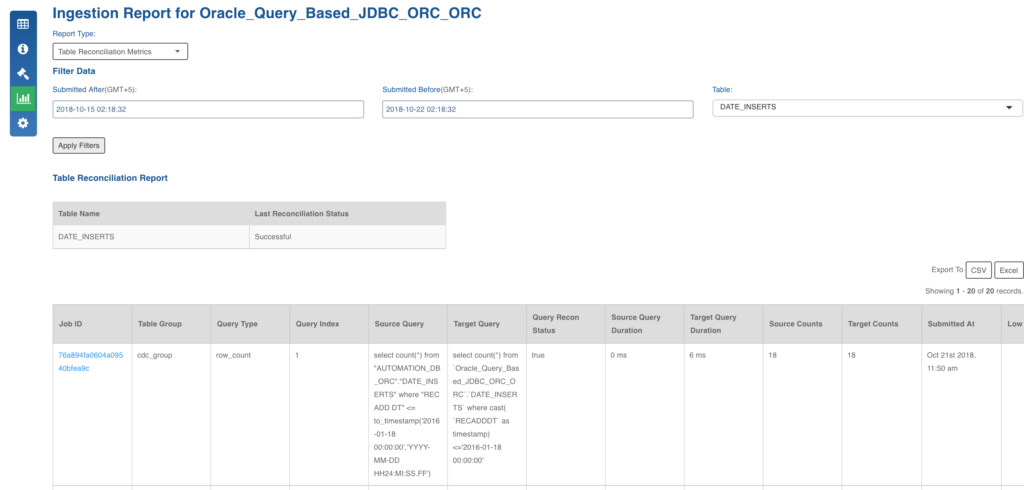

Table Reconciliation Metrics

Following are the steps to, generate the table reconciliation metrics of a given source:

- Click the Sources menu and select the required source.

- Click Ingestion Reports icon.

- In the Report Type drop-down, select Table Reconciliation Metrics.

- From the Table drop-down, select the required table.

- Choose the Submitted After and Submitted Before and click Apply Filters. The Table Reconciliation Report is displayed.

The following table lists the columns in the Table Reconciliation Report and their descriptions.

| Column | Description |

|---|---|

| Job ID | Unique identifier for a job. |

| Table Group | The table group name in which the required table is present. |

| Query Type | Identifies whether it is a Row Count or Aggregation query type. |

| Query Index | Order of execution of query in a table. |

| Source Query | Query that runs on RDBMS or Source system. |

| Target Query | Query that runs on Hive. |

| Query Recon Status | The status of each reconciliation query configured by the user. |

| Source Query Duration | Duration of source query execution. |

| Target Query Duration | Duration of target query execution. |

| Source Counts | Number of rows in the source table. |

| Target Counts | Number of rows in the target table. |

| Submitted At | The time and date when the job was submitted. |

| Low Watermark | The time of the first build. |

| High Watermark | The time at which the last incremental load completed. |

The Last Reconciliation Status of the table is also maintained which includes the reconciliation of all user-configured queries.

Table Group Reconciliation Metrics

The table group reconciliation metrics shows the details for a selected table group. The columns in the report are same as those of table reconciliation metrics.

The Last Reconciliation Status of the tablegroup is also maintained which includes the reconciliation of all user-configured queries.

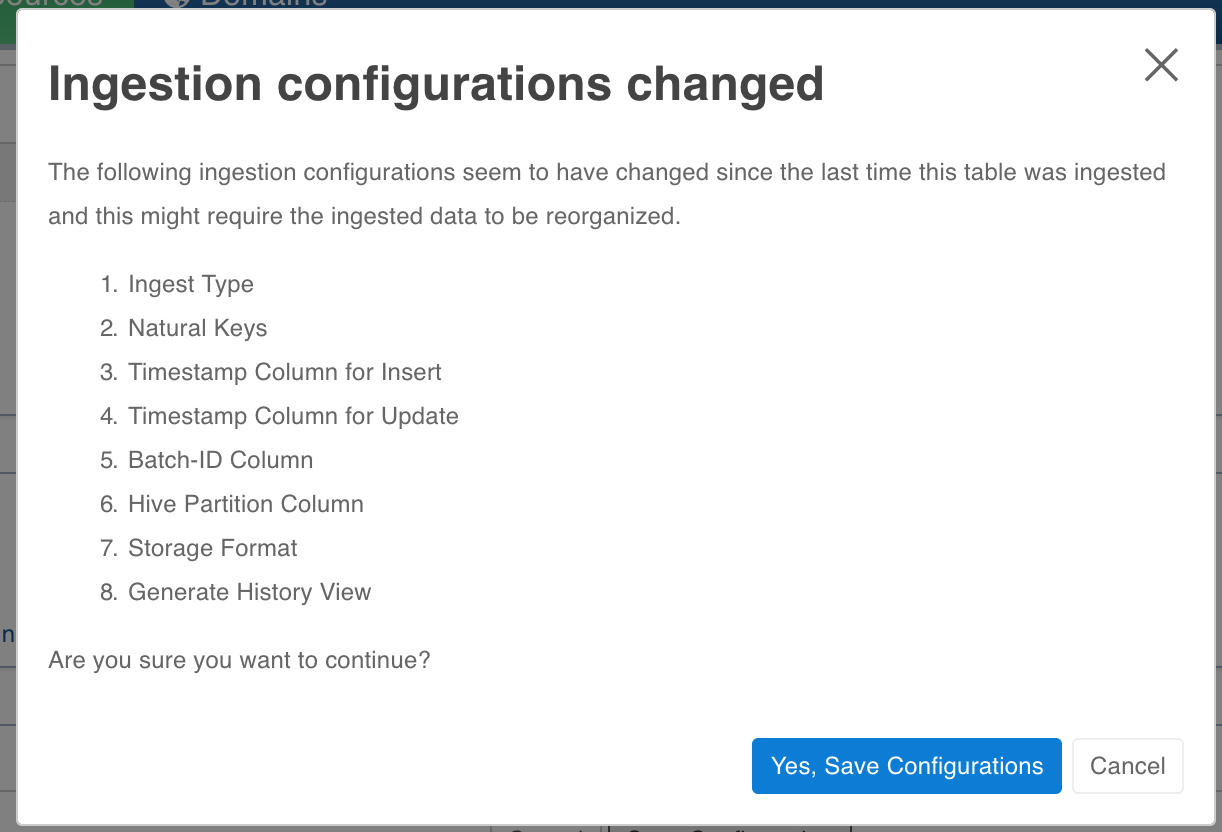

Data Reorganization and Table Configuration Management

To avoid some of the situations where user has to change a particular table level configuration and is forced to truncate reload the table.

Configurations which required a truncate reload before this feature.

- natural_key

- split_by_key

- external_audit_schema

- external_audit_table

- external_audit_join_column

- external_audit_timestamp_column

- captured_schema_name

- captured_instance_name

- storage_format

- partition_key

- number_of_secondary_partitions

- generate_history_view

- hive_table_name

- split_by_key_extract_from_col

- split_by_key_derive_function

- split_by_key_derive_column

- partition_key_extract_from_col

- partition_key_derive_column

- partition_key_derive_function

- is_infoworks_managed_table

Changes in the above configurations will be supported with some caveats in the following configuration:

hive table name: Hive table name change is supported in file based sources only, its not supported in RDBMS sources.

Changing the Configurations

The user interface for changing configurations remains the same. The difference is in what the configurations represent.

If the configurations are changed and the changed configurations require a restructuring job to run then on save UI will inform the user about the changed configurations which need a reorganizing job.

If user chooses to save the configs, he will be warned that a restructuring is required. User can run restructuring by clicking on Reorganize Now. The changes wont be saved if user clicks on cancel.

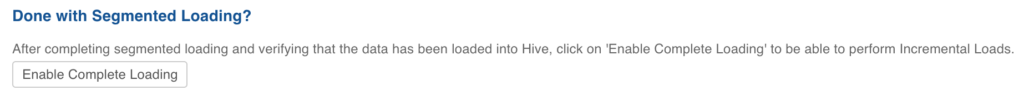

Segmented Load

For segmented load, the configuration changes are not supported. Currently it is not blocked from the user interface though. Hence all the configurations which are mentioned at the start of the document (the one that required truncate reload to change) should not be changed until all segments are crawled and you click Enable Complete Loading.

Configurations

- restructure_job_map_mem_mb- mapper memory for the crawl map reduce. Default is the value of iw_jobs_default_mr_map_mem_mb in conf.properties.

- restructure_job_red_mem_mb- reducer memory for the crawl map reduce. Default is the value of iw_jobs_default_mr_red_mem_mb in conf.properties.

Limitations

For tables that have been crawled already, changing the sync type to log based or query based is not supported. You must truncate the table and recrawl with the new configuration.

Cloudera Impala Support

The Impala support in Ingestion can be enabled on Cloudera distribution by setting Admin System Configuration cdh_impala_support to true. This allows the Ingestion Job to create tables over data in HDFS which can be queried through Impala or other applications.

Impala-Related Admin System Configuration

- cdh_impala_support: As explained above, you must set this configuration to true.

- fail_ingestion_on_impala_cache_refresh_failure: After successful completion of Ingestion job, the metadata cache is invalidated so that new files and tables are visible to Impala.

- When this flag is set to true, the job status is set to failed.

- For performance reasons, this flag should be set to false. The tables which were not invalidated are provided in Job summary and can be invalidated manually by running invalidate metadata {tableName} if the table is newly created or refresh {tableName} if the table already existed.

- impala_ingestion_validation: When true, the ingestion job will use Impala query engine to do validation after job. Otherwise, hive will be used.

Impala Support Known Issues

Ingestion known issues in case of Cloudera are dictated by Impala limitations in datatypes supported and compatibility with other tools like Hive.

- Impala does not support some of the datatypes: One of the datatypes not supported is Date. However, our Ingestion jobs convert Date datatype to Timestamp while ingesting values, so that you can ingest sources with schema containing Date. For more details, see Impala Datatypes.

- Impala Hive Timezone mismatch: For data files written by Hive, there can be inconsistency in timestamps when queried in Impala. This is because, Hive stores the timestamp values in local timezone. For compatibility without any workarounds, add -convert_legacy_hive_parquet_utc_timestamps=true as startup flag for impalad. Otherwise, while querying in Impala use from_utc_timestamp function to retreive the values. An example to get values in EDT: select from_utc_timestamp('recordTimestampColName',EDT') from table_name; For more details, see Timestamp Datatypes.

- Impala Datatypes for Partitioning: For more details, see Partition Key Columns.

NOTES:

- Impala support in Ingestion has been tested on Cloudera distribution 5.10.x.

- Impala support requires Parquet as storage_format.

- 3 boolean constants:

| Admin Configuration | Description |

|---|---|

| cdh_impala_support | Set to true if Impala support is required. |

| fail_ingestion_on_impala_cache_refresh_failure | Set to true if the ingestion job is to be failed in case Impala cache metadata is not refreshed. |

| impala_ingestion_validation | When true, the ingestion job will use Impala query engine to do validation after the job. Otherwise, hive will be used. |

Source Configuration Migration

Exporting Source Configuration

To export source configuration, follow these steps:

- Click Sources on the top menu.

- Click the required source for which you want to export the configuration.

- Click Settings icon.

- On the Source Settings page, scroll down to locate Configuration Migration section as shown below.

- Click Export. The source configuration gets downloaded.

NOTE: The export contains configurations for the source, and all the tables and table groups.

Importing Source Configuration

Following are the steps to import source configuration:

- Click Sources on the top menu.

- Click the required source for which you want to export the configuration.

- Click Settings icon.

- On the Source Settings page, scroll down to locate Configuration Migration section.

- Click Choose A File and select the configuration file that you downloaded while exporting.

- Use the checkboxes to select the tables that you want to import configurations for.

- Click Import Configuration.

NOTE: If the configuration for a table or a table group cannot be imported, the errors will be displayed and clicking on it will explain the error.

Table names in the source where the configuration is being imported must exactly match the exported configuration.

Passwords (such as under table export configuration) do not get exported. You must navigate to the table configuration and enter the password. If the configuration is being imported after the password was entered in this source, the password will not be removed.

Data Source Connectors

Infoworks data source connectors provide data ingestion and data synchronization capabilities for specific data source types.

A data source type refers to the unique combination of a specific brand of data source and the specified access protocol, platform or format of the data source.

A data source connector can be used to access unlimited, separate instances of that data source type. For example, a single data source connector for Oracle can be used to access unlimited number of Oracle databases or schemas.

Following are examples of the Infoworks data source connector types:

- Oracle Database

- Teradata Data Warehouse

- IBM DB/2 for Linux

- IBM DB/2 for z/OS

- Delimited Files

- XML Files

- JSON Files

A complete list of the currently available data source connectors can be provided by Infoworks.

For more details, refer to our Knowledge Base and Best Practices!

For help, contact our support team!

(C) 2015-2022 Infoworks.io, Inc. and Confidential