DataFoundry 2.7.2

Infoworks Installation on Existing HDInsight with ESP


This document describes the steps required to install Infoworks on an existing HDInsight cluster with ESP.

Prerequisites

The following are the prerequisites for creating an HDInsight cluster with ESP:

- Azure Active Directory Domain Services must already be set up in the Azure portal, with either a custom or the default domain, and be ready to use with Secure LDAP.

- If the HDInsight cluster must reside in a different resource group, ensure that a Vnet is created in the new resource group and peered with the Azure Active Directory Vnet, and vice versa.

HDInsight Deployment

Create an HDInsight HBase cluster, version 3.6, with the ESP option selected. Ensure that the cluster has an associated Vnet and subnet. When creating the cluster, note the following:

- Cluster Name

- Cluster Ambari Login User Name

- Cluster Ambari Login Password

- LDAP Admin User Password (the password of the admin user specified under the Security + Networking section when creating the HDInsight cluster)

Installation

The Infoworks installation procedure is as follows:

Adding Edge node

- Log in to portal.azure.com in a web browser and open the following URL: https://portal.azure.com/#create/Microsoft.Template/uri/https%3A%2F%2Fraw.githubusercontent.com%2FInfoworks%2Fdeployments%2Fmaster%2Fazure%2Fhdinsight%2Fexistinghdinsight%2FmainTemplate.json

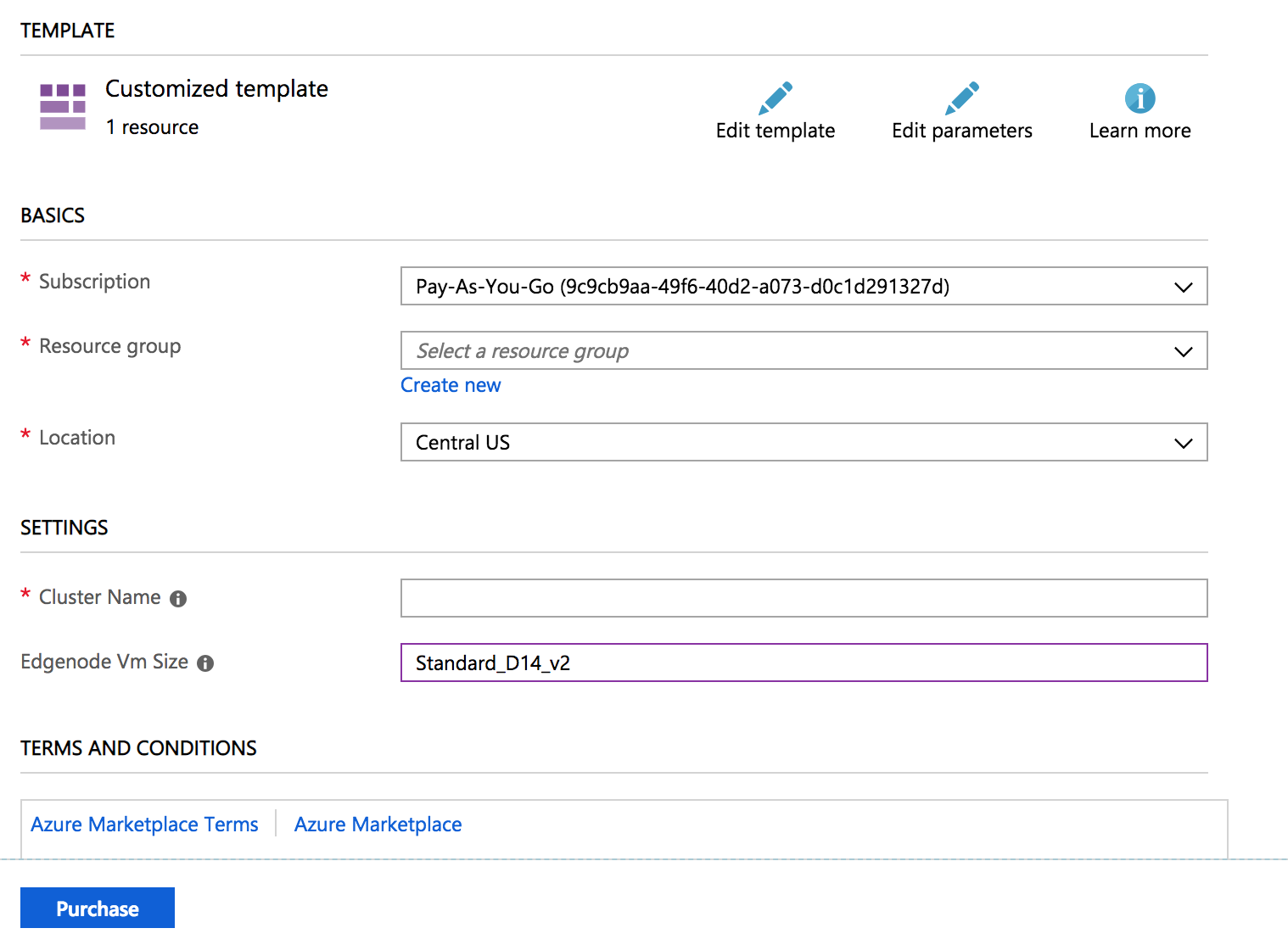

- The following interface will be displayed:

- Select the Resource group where the HDInsight cluster is located and provide the cluster name.

- The Edgenode Vm Size field is prepopulated with Standard_D13_v2. Contact the Infoworks support team to check whether a different value, for example, Standard_D14_v2, is needed. The appropriate size depends on the usage of the system and the expected workloads on the edge node.

- Once the values are entered, click Purchase and wait for the deployment to complete.
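The deployment link used in the first step is the portal's template prefix followed by the percent-encoded URI of the raw template file. The following is a minimal sketch of how such a link can be composed or decoded. The function names are illustrative and not part of any Infoworks or Azure tooling.

```python
from urllib.parse import quote, unquote

# Prefix and template URI are taken from the link shown in the steps above.
PORTAL_PREFIX = "https://portal.azure.com/#create/Microsoft.Template/uri/"
TEMPLATE_URI = (
    "https://raw.githubusercontent.com/Infoworks/deployments/master/"
    "azure/hdinsight/existinghdinsight/mainTemplate.json"
)


def portal_deploy_url(template_uri: str) -> str:
    """Return a portal link that opens a custom deployment for template_uri."""
    # safe="" makes quote() percent-encode ':' and '/' as well.
    return PORTAL_PREFIX + quote(template_uri, safe="")


def template_uri_from(portal_url: str) -> str:
    """Recover the raw template URI from a portal deployment link."""
    if not portal_url.startswith(PORTAL_PREFIX):
        raise ValueError("not a portal template deployment link")
    return unquote(portal_url[len(PORTAL_PREFIX):])


if __name__ == "__main__":
    print(portal_deploy_url(TEMPLATE_URI))
```

Decoding the link this way is a quick check that it points at the expected template before opening it in the portal.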

Configuring Spark 2 using Script Actions

- Log in to https://portal.azure.com.

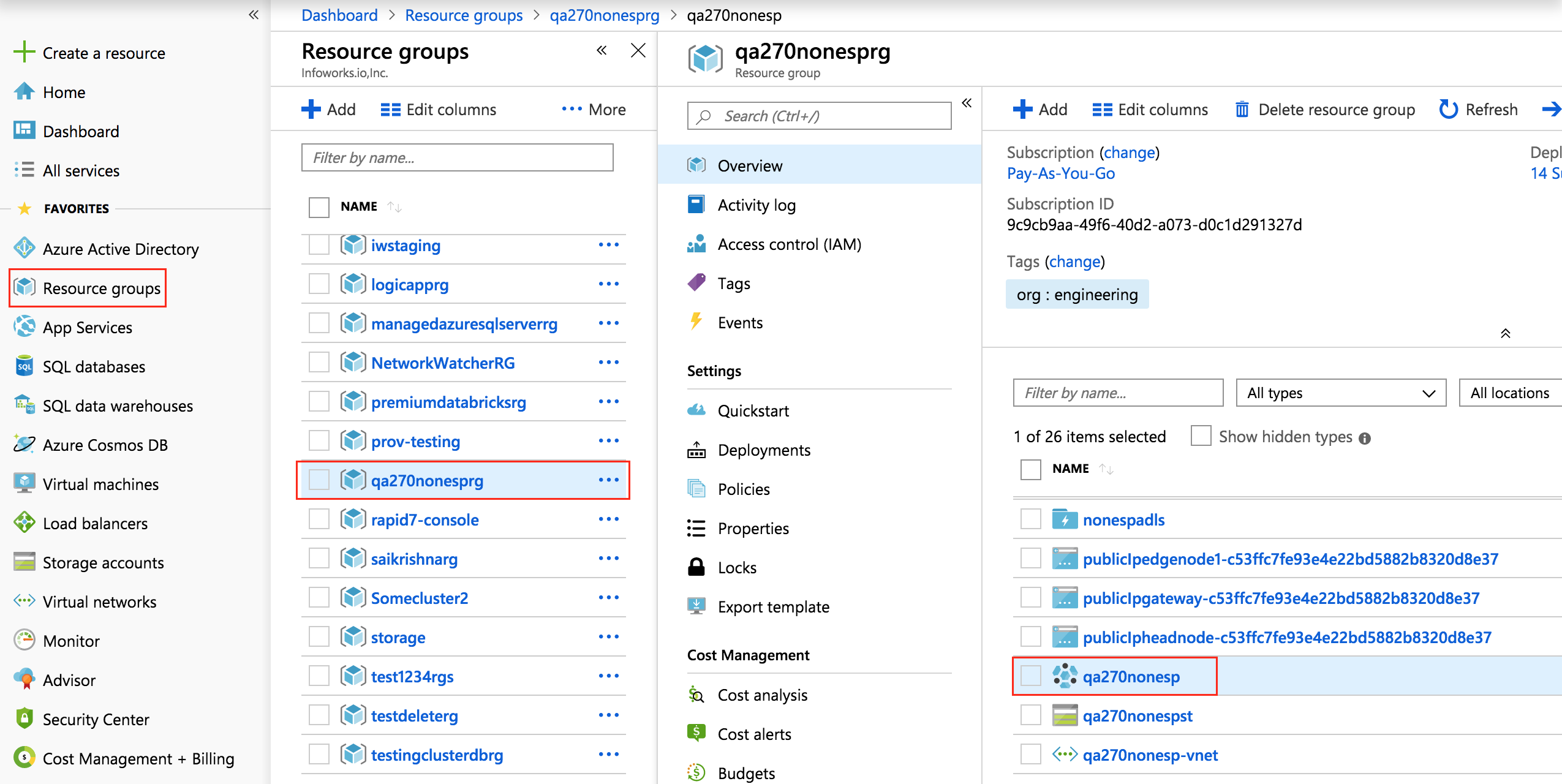

- Click the Resource Groups menu, select the resource group where the HDInsight cluster is located and select the respective HDInsight cluster.

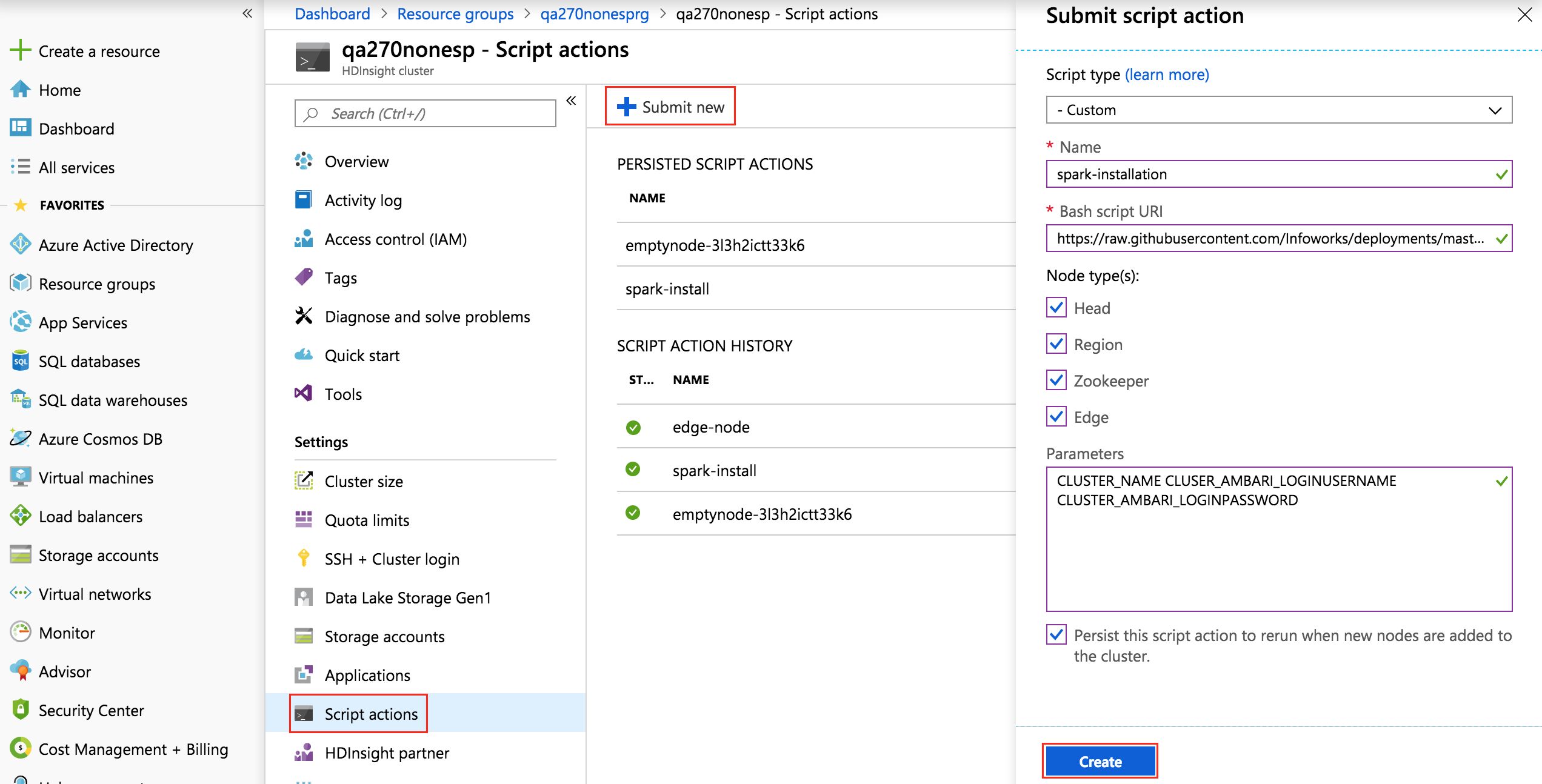

- Select the Script Actions menu and click Submit New to install Spark 2 on all the nodes.

Enter the following values:

- Script type: Select the type as Custom.

- Name: Enter a name, for example, spark-installation.

- Bash Script URI: Enter the following URI https://raw.githubusercontent.com/Infoworks/deployments/master/azure/hdinsight/existinghdinsight/spark-install.sh

- Node Types: Select all the node types: Head, Region, Zookeeper, and Edge. The script action runs only on the selected node types.

- Parameters: Enter the following space-separated parameters: CLUSTER_NAME CLUSTER_AMBARI_LOGINUSERNAME CLUSTER_AMBARI_LOGINPASSWORD

- Select the check box to persist the script action so that it reruns whenever a new node is added to the cluster.

- Click Create and wait for the deployment to finish.

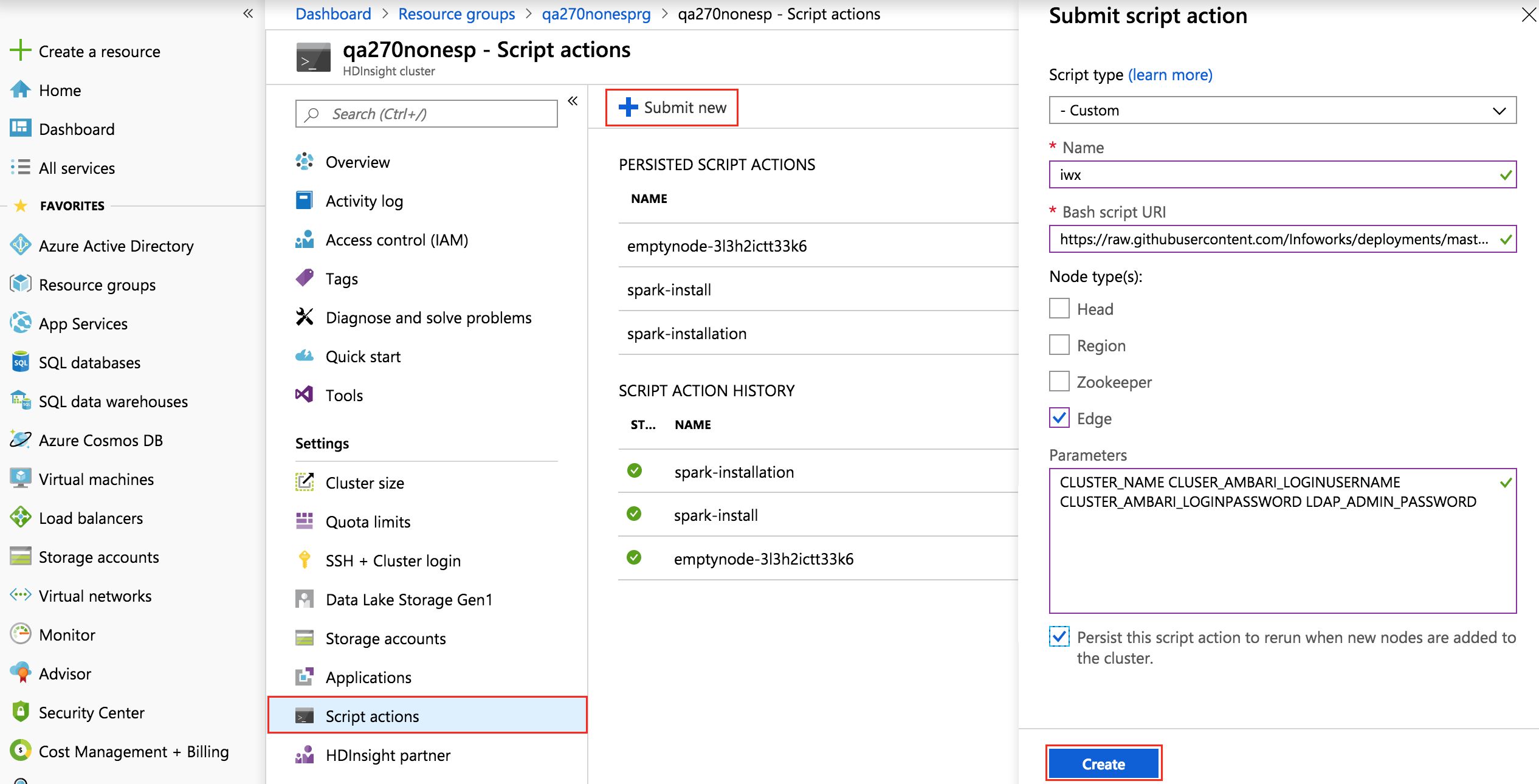

Installing Infoworks on Edge Node using Script Actions

- Log in to https://portal.azure.com.

- Click the Resource Groups menu, select the resource group where the HDInsight cluster is located and select the respective HDInsight Cluster.

- Select the Script Actions menu and click Submit New to install Infoworks on the edge node.

Enter the following:

- Script type: Select the value as Custom.

- Name: Enter a name, for example, iwx.

- Bash Script URI: Enter the following URI: https://raw.githubusercontent.com/Infoworks/deployments/master/azure/hdinsight/existinghdinsight/edgenode-init.sh

- Node Types: Select Edge node.

- Parameters: Enter the following space-separated parameters: CLUSTER_NAME CLUSTER_AMBARI_LOGINUSERNAME CLUSTER_AMBARI_LOGINPASSWORD LDAP_ADMIN_PASSWORD

- Select the check box to persist the script action so that it reruns whenever a new node is added to the cluster.

- Click Create and wait for the deployment to finish.
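Both script actions take their parameters as a single space-separated string, in a fixed order. The following sketch shows one way to assemble that string from the values noted during cluster creation. The function name and the example values are hypothetical. The sketch rejects values that contain whitespace, because how the scripts handle quoted values is not covered here.

```python
from typing import Optional


def script_action_parameters(
    cluster_name: str,
    ambari_user: str,
    ambari_password: str,
    ldap_admin_password: Optional[str] = None,
) -> str:
    """Build the Parameters field value for a script action.

    Pass ldap_admin_password only for the edge node installation,
    which expects it as the fourth parameter.
    """
    values = [cluster_name, ambari_user, ambari_password]
    if ldap_admin_password is not None:
        values.append(ldap_admin_password)
    for value in values:
        # A space inside a value would shift every following parameter.
        if not value or any(ch.isspace() for ch in value):
            raise ValueError("values must be non-empty and contain no whitespace")
    return " ".join(values)


if __name__ == "__main__":
    # Hypothetical example values.
    print(script_action_parameters("mycluster", "admin", "AmbariPass1"))
    print(script_action_parameters("mycluster", "admin", "AmbariPass1", "LdapPass1"))
```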

Manual Configurations

Set the following in the conf.properties file to enable Kerberos:

- iw_security_kerberos_enabled=true

- iw_security_kerberos_default_principal=LDAP_USER@<REALM>

- iw_security_kerberos_default_keytab_file=/home/LDAP_USER/LDAP_USER.keytab

- iw_security_kerberos_hiveserver_principal=hive/<HOSTNAME>@REALM;transportMode=http;httpPath=cliservice

- Modify the Hive port from 10000 to 10001 in the hive property, for example, hive=hive2://hostname:10001
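The Kerberos entries above follow a fixed pattern built from the LDAP user, the realm, and the Hive host name. The following sketch renders them as conf.properties lines so that the placeholders are filled in consistently. The function names and example values are hypothetical. The property names and value formats are copied from the settings above.

```python
def kerberos_properties(ldap_user: str, realm: str, hive_host: str) -> dict:
    """Return the Kerberos-related conf.properties entries as a dict."""
    return {
        "iw_security_kerberos_enabled": "true",
        "iw_security_kerberos_default_principal": f"{ldap_user}@{realm}",
        "iw_security_kerberos_default_keytab_file": (
            f"/home/{ldap_user}/{ldap_user}.keytab"
        ),
        "iw_security_kerberos_hiveserver_principal": (
            f"hive/{hive_host}@{realm};transportMode=http;httpPath=cliservice"
        ),
    }


def hive_property(hostname: str, port: int = 10001) -> str:
    """Return the hive property line using port 10001 instead of 10000."""
    return f"hive=hive2://{hostname}:{port}"


def render(props: dict) -> str:
    """Render a dict as key=value lines, one entry per line."""
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"


if __name__ == "__main__":
    # Hypothetical example values.
    print(render(kerberos_properties("iwuser", "EXAMPLE.COM", "hn0-example")), end="")
    print(hive_property("hn0-example"))
```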

Hive Configurations

In the Ambari UI, select the Hive service, then select Configs and Advanced.

- Add the following property in the custom Hive site section: hive.security.authorization.sqlstd.confwhitelist.append=.*

- Change the doAs setting (Run as end user instead of Hive user) from false to true.
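After the Hive settings are saved, the resulting configuration can be checked from a copy of the hive-site XML. The following sketch parses the standard Hadoop configuration layout (property elements with name and value children) and reports entries that differ from the expected values. It assumes that the doAs toggle corresponds to the hive.server2.enable.doAs property. The function names are illustrative, and where the file is located on a given node is not covered here.

```python
import xml.etree.ElementTree as ET

EXPECTED = {
    "hive.security.authorization.sqlstd.confwhitelist.append": ".*",
    # Assumption: the doAs toggle in Ambari maps to this property.
    "hive.server2.enable.doAs": "true",
}


def read_hive_site(xml_text: str) -> dict:
    """Parse Hadoop-style configuration XML into a name -> value dict."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name is not None:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def mismatches(xml_text: str, expected: dict = EXPECTED) -> dict:
    """Return expected keys whose actual value differs (None if missing)."""
    actual = read_hive_site(xml_text)
    return {
        key: actual.get(key)
        for key, value in expected.items()
        if actual.get(key) != value
    }
```

An empty result from mismatches means both settings are in place. Otherwise the returned dict shows the current value of each setting that still needs to be changed.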


(C) 2015-2022 Infoworks.io, Inc. and Confidential
