Throttling

Data copy is performed by parallel tasks that consume network bandwidth. To limit the number of bytes transferred per second, Infoworks provides static and dynamic throttling. Throttling lets you specify the maximum number of bytes per second that an individual task may transfer while it runs in parallel with other tasks.
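Because the limit applies to each task rather than to the job as a whole, the total bandwidth a job can consume grows with the number of parallel tasks. The following minimal sketch illustrates that arithmetic. It assumes that every task is throttled independently and that the limit is expressed in megabytes per second; the function name and the task count are hypothetical and are not part of Infoworks.

```python
def aggregate_limit_mb(per_task_limit_mb: float, parallel_tasks: int) -> float:
    """Upper bound on total throughput, assuming each task is throttled independently."""
    if per_task_limit_mb < 0 or parallel_tasks < 0:
        raise ValueError("limit and task count must be non-negative")
    return per_task_limit_mb * parallel_tasks


# Hypothetical example: 8 parallel tasks, each capped at 100 MB per second.
print(aggregate_limit_mb(100, 8))
```

When sizing the per-task value, it may help to divide the bandwidth you are willing to give the job by the expected number of parallel tasks.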

Static Throttling

For Hive batch and incremental replications, the static throttling limit can be specified in the Advanced Configurations section, with the key BANDWIDTH and the value set to the throttling threshold in megabytes. The job loads the static throttling value when it starts and uses that value until it ends. To change the value while the job is running, see the Dynamic Throttling section.

For HDFS transfer, this configuration can be set by adding the following property to the file_transfer.xml file:

<property>
  <name>infoworks.replicator.map.bandwidth.mb</name>
  <value>100</value>
</property>

Dynamic Throttling

Dynamic throttling allows you to change the throttling parameters while the job is running.

NOTE: Dynamic throttling works only if the ZooKeeper connection string is configured when the job starts.

To perform dynamic throttling, follow these steps:

- Set the following property in the mr.conf file during batch and incremental replication:

zookeeper.connection.string=ip-172-30-1-100.ec2.internal:2181

The value must be a connection string consisting of a comma-separated list of host:port pairs, each corresponding to a ZooKeeper server.

- For HDFS replication, add the following property to the file_transfer.xml file:

<property>
  <name>zookeeper.connection.string</name>
  <value>ip-172-30-1-100.ec2.internal:2181</value>
</property>

NOTE: Ensure that the jobs and workflows are configured as specified above so that the throttling parameter can be changed during job execution.

- Infoworks ships a script with the installation that can either be executed from the command line or be added to a workflow as a single Bash Script node and called from the UI. The workflow that contains the script can also be scheduled.
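The connection string format used in the steps above (a comma-separated list of host:port pairs) can be checked before a job starts. The following is a minimal sketch of such a check, not an Infoworks utility: the function name is hypothetical, and it assumes plain host:port entries with no additional suffix.

```python
from typing import List, Tuple


def parse_connection_string(conn: str) -> List[Tuple[str, int]]:
    """Split a comma-separated list of host:port pairs into (host, port) tuples."""
    pairs = []
    for entry in conn.split(","):
        entry = entry.strip()
        # Split on the last colon so the port is always the trailing component.
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdecimal():
            raise ValueError(f"expected host:port, got {entry!r}")
        port_number = int(port)
        if not 1 <= port_number <= 65535:
            raise ValueError(f"port out of range in {entry!r}")
        pairs.append((host, port_number))
    return pairs


# Value taken from the example property above.
print(parse_connection_string("ip-172-30-1-100.ec2.internal:2181"))
```

A malformed entry, such as a host without a port, raises ValueError, so a misconfigured string can be caught before the job is submitted.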

Using Script from Command Line

The script is available in the <Infoworks_home>/bin/scripts/throttle.sh file.

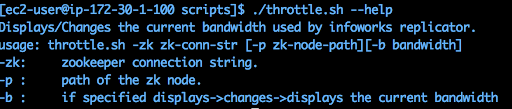

To view the script usage, run the following command:

./throttle.sh --help

(C) 2015-2022 Infoworks.io, Inc. and Confidential